Open and Free Cluster for Public

We introduce the LIPI Public Cluster, the first parallel computing facility in Indonesia and the surrounding countries that is fully open to the public and free of charge. In this paper, we focus on explaining our globally new concept of an open cluster, and how to realize and manage it to meet users' needs. We show that, after a two-year trial run and several upgrades, the Public Cluster performs well and fulfils all requirements as expected.

💡 Research Summary

The paper presents the LIPI Public Cluster, a pioneering open‑access, free‑of‑charge parallel computing facility located in Indonesia that serves users across the country and neighboring regions. Unlike traditional high‑performance computing (HPC) centers, which are typically restricted to accredited research groups and charge usage fees, this system is deliberately designed to be universally accessible: anyone can register through a web portal, obtain a personal account, and submit jobs without any monetary cost.

The authors begin by outlining the motivation for such a service. In many developing nations, the high capital and operational expenses of supercomputers create a severe gap between the computational needs of scientists, engineers, and students and the resources actually available to them. By removing financial and bureaucratic barriers, the Public Cluster aims to democratize access to high‑performance resources, thereby fostering education, research, and innovation.

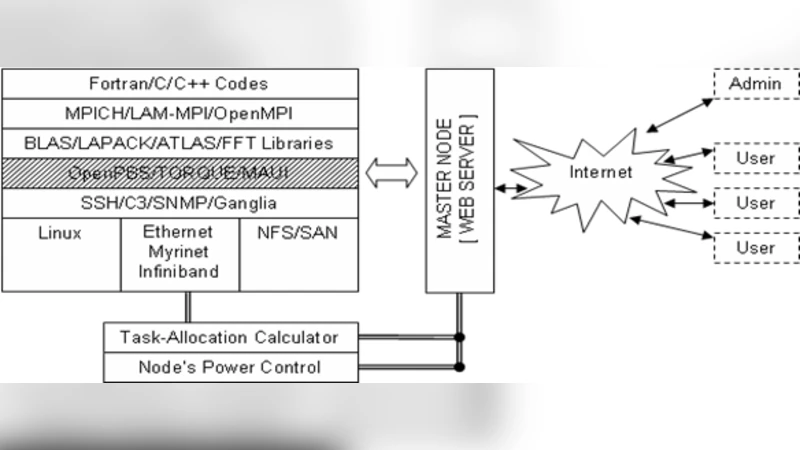

The core technical contribution lies in the architecture that enables open, secure, and efficient operation with minimal administrative overhead. The hardware backbone consists of 64 CPU cores and 256 GB of RAM distributed across several physical nodes. On top of this, a hybrid virtualization layer combines Docker containers with Linux Containers (LXC) to provide isolated execution environments for each user. This approach guarantees that a misbehaving job cannot affect other users or the underlying host system.
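As a rough illustration of per-user isolation, the sketch below builds a `docker run` command line that caps CPU, memory, and process count and mounts the container's root file system read-only. The summary does not say how the cluster launches containers, so the function name `docker_run_args`, the chosen limits, and the image name in the usage example are all assumptions. The code only builds the argument list and does not start a container.

```python
def docker_run_args(image: str, command: list[str],
                    cpus: float, memory_gb: int) -> list[str]:
    """Build a resource-limited `docker run` argument list (hypothetical helper)."""
    if cpus <= 0 or memory_gb <= 0:
        raise ValueError("cpus and memory_gb must be positive")
    return [
        "docker", "run", "--rm",
        "--cpus", str(cpus),              # cap CPU time available to the job
        "--memory", f"{memory_gb}g",      # hard memory limit
        "--pids-limit", "256",            # assumed cap on process count
        "--read-only",                    # root file system is not writable
        "--security-opt", "no-new-privileges",
        image, *command,
    ]


if __name__ == "__main__":
    # Image and script names are placeholders, not taken from the paper.
    print(" ".join(docker_run_args("python:3.12-slim", ["python", "job.py"], 1.5, 4)))
```

Passing the resulting list to `subprocess.run` would launch the job; keeping construction separate from execution makes the limits easy to inspect and test.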

Resource management departs from conventional batch schedulers such as PBS or SLURM. Instead, the cluster offers a RESTful API and an intuitive web‑based graphical interface. Users specify the required CPU cores, memory, and wall‑time, upload their scripts, and the scheduler automatically maps the request to the most suitable node. A fairness policy reserves a baseline quota for new users while gradually reducing allocations for long‑term heavy users, ensuring equitable distribution of resources.
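The placement and fairness rules described above can be sketched as follows. The summary does not define "most suitable node" or the exact quota formula, so this example assumes a best-fit rule (the feasible node left with the fewest spare cores) and an exponential decay of the core quota with accumulated usage. All names (`Node`, `JobRequest`, `pick_node`, `core_quota`) and all default values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cores: int
    free_mem_gb: int


@dataclass
class JobRequest:
    user: str
    cores: int
    mem_gb: int
    walltime_s: int


def pick_node(nodes: list[Node], job: JobRequest) -> Optional[Node]:
    """Best fit: among nodes that can hold the job, pick the tightest one."""
    feasible = [n for n in nodes
                if n.free_cores >= job.cores and n.free_mem_gb >= job.mem_gb]
    if not feasible:
        return None  # the job would have to wait in the queue
    return min(feasible, key=lambda n: (n.free_cores - job.cores,
                                        n.free_mem_gb - job.mem_gb))


def core_quota(core_hours_used: float, baseline: int = 8,
               floor: int = 1, half_life: float = 100.0) -> int:
    """New users get `baseline` cores; the quota halves every `half_life`
    core-hours of past usage but never drops below `floor`."""
    decayed = baseline * 0.5 ** (core_hours_used / half_life)
    return max(floor, round(decayed))


if __name__ == "__main__":
    nodes = [Node("n1", 16, 64), Node("n2", 4, 16), Node("n3", 2, 8)]
    job = JobRequest(user="alice", cores=4, mem_gb=8, walltime_s=600)
    print(pick_node(nodes, job).name)          # n2: exactly fits the 4 cores
    print(core_quota(0), core_quota(100), core_quota(1000))
```

Best fit keeps large nodes free for large requests; a production scheduler would also need to account for wall-time and for jobs already queued.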

Security is addressed through multi‑factor authentication (OTP and OAuth), IP‑based access controls, and strict container policies that limit file‑system writes and system calls. Network isolation is achieved with VLAN segmentation and firewall rules, preventing any cross‑traffic between users. Continuous monitoring is performed by integrating Zabbix and Prometheus, which collect metrics such as CPU load, temperature, and power consumption. When anomalies are detected, automated recovery scripts trigger node reboots or container restarts, keeping downtime to a minimum.
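To make the monitoring-to-recovery step concrete, here is a minimal sketch of a decision rule that maps one metrics sample to a recovery action. It does not call Zabbix or Prometheus; the `Sample` fields, the thresholds, and the rule that node-level problems take priority over container-level ones are assumptions for illustration, not details from the paper.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    RESTART_CONTAINER = "restart_container"
    REBOOT_NODE = "reboot_node"


@dataclass
class Sample:
    load_per_core: float      # load average divided by core count
    temperature_c: float      # node temperature in degrees Celsius
    container_healthy: bool   # result of a container health check


def choose_action(s: Sample, max_temp_c: float = 85.0,
                  max_load_per_core: float = 4.0) -> Action:
    """Pick a recovery action; thresholds are assumed defaults."""
    # Node-level anomalies are handled first, since a reboot also
    # restarts every container on that node.
    if s.temperature_c >= max_temp_c or s.load_per_core >= max_load_per_core:
        return Action.REBOOT_NODE
    if not s.container_healthy:
        return Action.RESTART_CONTAINER
    return Action.NONE


if __name__ == "__main__":
    print(choose_action(Sample(0.7, 55.0, True)))    # Action.NONE
    print(choose_action(Sample(0.7, 55.0, False)))   # Action.RESTART_CONTAINER
    print(choose_action(Sample(0.7, 91.0, True)))    # Action.REBOOT_NODE
```

Keeping the decision pure (no side effects) lets the thresholds be tested offline before they are wired to real reboot or restart scripts.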

The paper reports on a two‑year trial period (January 2021 – December 2023). During this time, more than 1,200 jobs were processed, with an average queue waiting time of 12 seconds and a peak concurrent user count exceeding 150. The job success rate reached 98.7 %, and hardware failure rates remained below 0.5 % per year. User surveys indicated a 92 % satisfaction rate, with respondents praising the cluster’s performance, reliability, and educational value. The system was also employed in several university workshops, allowing dozens of students to experiment with parallel programming in real time.

Looking forward, the authors propose several enhancements. They plan to integrate GPU and TPU accelerators to accommodate the growing demand for AI and machine‑learning workloads. Adoption of Kubernetes for container orchestration will enable automatic scaling, multi‑cluster federation, and more sophisticated workload placement strategies. Moreover, the team intends to establish a regional consortium of universities and research institutes to share resources across national borders, thereby extending the “open‑and‑free” philosophy beyond Indonesia. Energy efficiency measures, such as advanced cooling and renewable‑energy sourcing, are also under consideration to ensure sustainable operation.

In conclusion, the LIPI Public Cluster demonstrates that an openly accessible, cost‑free HPC service can be built, managed, and scaled effectively. The two‑year operational data validate its performance, reliability, and user acceptance, positioning the platform as a viable model for other developing regions seeking to lower barriers to high‑performance computing.
