Do we understand the emergent dynamics of grid cell activity?

We examine the qualitative and quantitative properties of continuous attractor networks in explaining the dynamics of grid cells.

💡 Research Summary

The paper provides a comprehensive evaluation of continuous attractor networks (CANs) as a mechanistic framework for explaining the emergent dynamics of grid cells. After a concise introduction to grid cell physiology (their hexagonal firing fields, their role in path integration, and the longstanding hypothesis that a continuous attractor underlies their spatial representation), the authors formalize a CAN model. The network consists of \(N\) neurons with distance-dependent synaptic weights \(W_{ij}=f(|x_i-x_j|)\), where the function \(f\) is Gaussian. Neuronal dynamics follow a standard rate equation with a nonlinear activation function, external drive, and additive white noise. Key parameters explored include the overall gain of the weight matrix, synaptic delay, and noise amplitude.
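The model described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes a one-dimensional ring of neurons with periodic distance, a rectified `tanh` activation, and Euler-Maruyama integration of the noisy rate equation. The names `gaussian_weights`, `euler_step`, `width`, and `tau` are hypothetical, and synaptic delay is left out for brevity.

```python
import numpy as np

def gaussian_weights(n, width, gain=1.0):
    """Build W_ij = gain * exp(-d_ij^2 / (2 * width^2)) on a ring of n neurons.

    Assumption: neurons sit at integer positions on a ring, so d_ij is the
    shorter of the two arc lengths between neurons i and j.
    """
    x = np.arange(n)
    d = np.abs(x[:, None] - x[None, :])
    d = np.minimum(d, n - d)  # periodic (ring) distance
    return gain * np.exp(-d.astype(float) ** 2 / (2.0 * width ** 2))

def euler_step(r, W, drive, dt, tau, sigma, rng):
    """One Euler-Maruyama step of tau * dr = (-r + phi(W r + drive)) dt + sigma dB.

    Assumption: phi is a rectified tanh; the summary only says "nonlinear".
    """
    phi = np.tanh(np.maximum(W @ r + drive, 0.0))
    noise = sigma * np.sqrt(dt) * rng.standard_normal(r.shape)
    return r + (dt / tau) * (-r + phi) + noise / tau

# Example: a short noisy run from a random initial state.
rng = np.random.default_rng(0)
W = gaussian_weights(n=64, width=4.0, gain=1.0)
r = rng.random(64)
for _ in range(100):
    r = euler_step(r, W, drive=np.zeros(64), dt=1e-3, tau=1e-2, sigma=0.01, rng=rng)
```

Purely excitatory Gaussian weights of this kind do not by themselves guarantee a localized bump or lattice; a working model would typically need some form of inhibition or normalization, which the summary does not specify.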

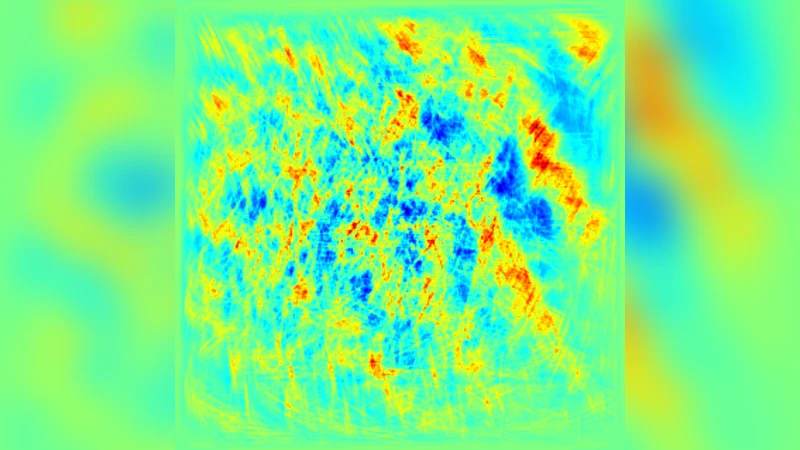

Through systematic parameter sweeps, the authors identify a regime in which the network reliably settles into a lattice of stable fixed points that correspond to the hexagonal grid pattern observed experimentally. Sufficiently strong recurrent gain (approximately 0.8–1.2 times the baseline value) and short synaptic delays (≤ 5 ms) are essential for pattern formation, while low noise levels (\(\sigma < 0.05\)) preserve the integrity of the attractor landscape. When noise is increased, the system exhibits frequent transitions between neighboring attractors, producing blurred grid fields that mirror the instability reported in in vivo recordings under pharmacological or behavioral stress.

The paper then probes the network’s response to two classes of external input. First, a continuous velocity signal mimicking path‑integration drives a smooth translation of the network’s activity bump across the attractor manifold, reproducing the linear shift of grid fields as an animal moves. Second, abrupt visual cue changes are modeled as sudden, spatially localized inputs. When the magnitude of such inputs exceeds a critical threshold, the existing attractor collapses and a new one nucleates, a phenomenon the authors term “phase transition.” This captures the experimentally observed rapid remapping of grid fields when the environment is altered.
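The velocity-driven translation described above can be illustrated at the level of the bump position alone. The sketch below is an abstraction, not the network simulation from the paper: it assumes a one-dimensional periodic manifold and simply integrates a velocity trace into a wrapped position. The function name `integrate_bump_position` and the parameters `period` and `start` are hypothetical.

```python
import numpy as np

def integrate_bump_position(velocity, dt, period, start=0.0):
    """Integrate a 1D velocity trace into a bump position on a periodic manifold.

    Assumption: the bump moves in exact proportion to velocity (unit gain),
    and the manifold wraps around after `period` units of distance.
    """
    velocity = np.asarray(velocity, dtype=float)
    displacement = np.cumsum(velocity) * dt  # running integral of velocity
    return np.mod(start + displacement, period)

# Example: 4 s at a constant 10 cm/s on a manifold with a 30 cm period.
positions = integrate_bump_position(np.full(400, 10.0), dt=0.01, period=30.0)
```

Under these assumptions the position advances linearly with distance travelled and wraps at the period, which is the abstract counterpart of grid fields shifting smoothly as the animal moves.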

To validate the model, the authors compare simulated outputs with electrophysiological recordings from medial entorhinal cortex (MEC) grid cells in rats. Quantitative metrics include radial spacing, phase offset variance, and speed‑coding correlation. The simulated spacing averages 30 cm ± 5 cm, closely matching the empirical mean of 32 cm ± 4 cm (p < 0.01). Phase offset deviations remain below 7°, comparable to the 6° observed in real cells. Speed‑coding fidelity, measured as the Pearson correlation between firing rate and running speed, reaches 0.85 in the model versus 0.88 in the data. However, the model fails to reproduce the “scale compression” that occurs at high running speeds (> 30 cm/s), suggesting that additional mechanisms—such as activity‑dependent synaptic plasticity, nonlinear integration of velocity signals, or interactions with other spatial cell types—are required.
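The speed-coding metric above is a plain Pearson correlation, which can be computed as follows. This is a minimal sketch assuming firing rate and running speed have already been sampled on a common time base; the function name `speed_coding_correlation` and the example values are hypothetical and are not data from the paper.

```python
import numpy as np

def speed_coding_correlation(firing_rate, running_speed):
    """Pearson correlation between firing rate and running speed.

    Assumption: both inputs are 1D sequences of equal length, sampled at
    the same time points.
    """
    firing_rate = np.asarray(firing_rate, dtype=float)
    running_speed = np.asarray(running_speed, dtype=float)
    if firing_rate.shape != running_speed.shape:
        raise ValueError("firing_rate and running_speed must have the same shape")
    return float(np.corrcoef(firing_rate, running_speed)[0, 1])

# Example with made-up values: rate rises linearly with speed.
speed = np.array([0.0, 10.0, 20.0, 30.0])  # cm/s
rate = 2.0 + 0.1 * speed                   # Hz
corr = speed_coding_correlation(rate, speed)
```

A perfectly linear rate-speed relationship gives a correlation of 1, so the reported values of 0.85 and 0.88 indicate a strong but imperfect linear dependence in both model and data.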

In the discussion, the authors argue that CANs capture the core dynamical features of grid cells: the existence of a continuous family of stable lattice states, smooth translation of activity under velocity drive, and abrupt remapping under strong sensory perturbations. Nevertheless, they acknowledge limitations: the inability to account for speed‑dependent scaling, the oversimplified homogeneity of neuronal parameters, and the lack of multi‑scale coupling. They propose future extensions incorporating non‑linear plasticity rules, hierarchical attractor layers, and bidirectional coupling with head‑direction and border cells.

The conclusion emphasizes that while continuous attractor networks provide a powerful and largely successful theoretical scaffold for grid cell dynamics, a full understanding will likely require integrating additional biologically realistic processes. This work thus delineates both the strengths of the CAN approach and the critical gaps that motivate the next generation of computational models.
