Public Cluster : parallel machine with multi-block approach

We introduce a new approach to enabling an open, public parallel machine that is accessible to multiple users running multiple jobs at the same time, with each job belonging to a different block. The concept is required especially for parallel machines dedicated to public use, as implemented at the LIPI Public Cluster. We have deployed the simplest technique, running multiple daemons of the parallel processing engine with a different configuration file for each user assigned to access the system, and have also developed an integrated system to fully control and monitor the whole system over the web. A brief performance analysis is also given for the Message Passing Interface (MPI) engine. It is shown that the proposed approach is quite reliable and affects overall performance only slightly.

💡 Research Summary

The paper presents a novel architecture for operating a public, open‑access parallel computing cluster that can serve multiple users simultaneously, each running independent parallel jobs. The authors focus on the LIPI Public Cluster, a facility intended for educational and research purposes where users are allowed to log in and submit jobs without prior reservation. The central challenge addressed is how to prevent resource contention, ensure security, and provide a user‑friendly management interface in such an unrestricted environment.

To solve this, the authors introduce a “multi‑block” concept: the physical cluster is logically divided into several blocks, each block being dedicated to a single user or a specific job group. For every block a separate instance of the MPI runtime daemon is launched, using a user‑specific configuration file that defines its own communication ports, process groups, and environment variables. This “multi‑daemon” approach guarantees that messages from one user’s job never interfere with those of another, because each daemon operates in its own isolated namespace. The isolation is reinforced at the file‑system level (using chroot‑like mechanisms) and at the network level (dynamic firewall rules that bind each daemon to a unique port range).
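The per-block configuration idea can be illustrated with a minimal Python sketch. It is not the authors' implementation: the names `BlockConfig`, `make_block_configs`, and `render_config`, the base port, the range width, and the `key=value` file layout are all assumptions made for illustration. The only point it shows is that giving each block its own non-overlapping port range and node list is a simple bookkeeping step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockConfig:
    """Hypothetical description of one block (one user, one daemon)."""
    user: str
    nodes: tuple
    port_min: int
    port_max: int


def make_block_configs(assignments, base_port=20000, ports_per_block=100):
    """Give every user a block with its own, non-overlapping port range.

    `assignments` maps a user name to the list of nodes in that user's block.
    The base port and range width are illustrative defaults, not values
    taken from the paper.
    """
    configs = []
    for index, (user, nodes) in enumerate(sorted(assignments.items())):
        port_min = base_port + index * ports_per_block
        port_max = port_min + ports_per_block - 1
        configs.append(BlockConfig(user, tuple(nodes), port_min, port_max))
    return configs


def render_config(cfg):
    """Render a block as text in an assumed key=value layout."""
    lines = [
        f"# hypothetical per-block daemon configuration for {cfg.user}",
        f"user={cfg.user}",
        f"port_range={cfg.port_min}-{cfg.port_max}",
        "nodes=" + ",".join(cfg.nodes),
    ]
    return "\n".join(lines) + "\n"


def ranges_overlap(a, b):
    """Return True if two blocks share at least one port."""
    return a.port_min <= b.port_max and b.port_min <= a.port_max


if __name__ == "__main__":
    blocks = make_block_configs({"alice": ["node01", "node02"], "bob": ["node03"]})
    for block in blocks:
        print(render_config(block))
```

Sorting the users before assigning ranges keeps the mapping deterministic, so regenerating the files does not silently move a running block to a different port range.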

A complementary web‑based control and monitoring system is built on top of Nginx and a Flask backend. Through a standard web browser, users can submit jobs, view real‑time progress, download logs, and terminate their tasks. Administrators have a dashboard that visualizes node health (CPU, memory, network utilization), block allocations, and daemon status. All communications are protected by HTTPS and token‑based authentication, providing a secure channel even though the cluster is publicly reachable.

Implementation details include a CentOS 7 operating system, OpenMPI 4.0.3 as the parallel engine, and Python scripts that automatically generate and launch per‑user MPI daemons upon login. Resource scheduling is policy‑driven: each block is assigned a fixed number of nodes and cores, and when a user finishes or a timeout occurs the corresponding block and its daemons are torn down, freeing resources for the next user.
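The allocation and teardown policy described above can be sketched as a small bookkeeping class. This is an illustrative model only: `BlockAllocator` and its methods are hypothetical names, time is passed in explicitly as a number of seconds, and starting or stopping the actual daemons is deliberately left out.

```python
class BlockAllocator:
    """Hypothetical fixed-size block allocator with timeout-based teardown."""

    def __init__(self, nodes, nodes_per_block, timeout_s):
        self.free = list(nodes)          # nodes not assigned to any block
        self.nodes_per_block = nodes_per_block
        self.timeout_s = timeout_s
        self.blocks = {}                 # user -> (nodes, start_time)

    def allocate(self, user, now):
        """Assign a block to `user`; return its nodes, or None if none are free."""
        if user in self.blocks:
            raise ValueError(f"{user} already holds a block")
        if len(self.free) < self.nodes_per_block:
            return None
        nodes = [self.free.pop(0) for _ in range(self.nodes_per_block)]
        self.blocks[user] = (nodes, now)
        return nodes

    def release(self, user):
        """Tear down a user's block and return its nodes to the free pool."""
        nodes, _ = self.blocks.pop(user)
        self.free.extend(nodes)

    def reap_expired(self, now):
        """Release every block whose age has reached the timeout."""
        expired = [
            user
            for user, (_, start) in self.blocks.items()
            if now - start >= self.timeout_s
        ]
        for user in expired:
            self.release(user)
        return expired


if __name__ == "__main__":
    allocator = BlockAllocator([f"node{i:02d}" for i in range(4)], 2, timeout_s=60)
    print(allocator.allocate("alice", now=0))
    print(allocator.allocate("bob", now=10))
    print(allocator.allocate("carol", now=20))   # no free nodes yet
    print(allocator.reap_expired(now=60))        # alice's block times out
    print(allocator.allocate("carol", now=61))
```

Passing the clock value in as an argument, rather than reading it inside the class, keeps the policy easy to test without waiting for real timeouts.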

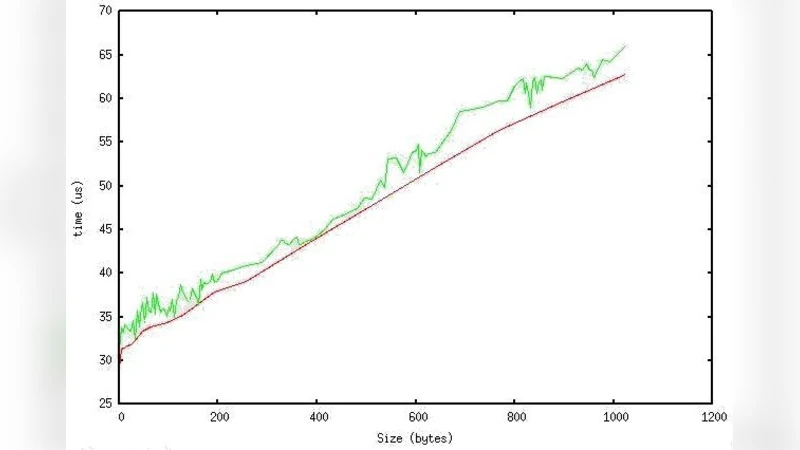

Performance evaluation is carried out on a 32‑node cluster (8 cores per node). Standard MPI benchmarks (Ping‑Pong latency and bandwidth tests) are run both in a traditional single‑block configuration and in the proposed multi‑block configuration with four blocks executing concurrently. Results show an increase in latency from 1.2 ms to 1.3 ms (about 8%) and a reduction in bandwidth from 1.8 GB/s to 1.7 GB/s (about 6%). The authors attribute this overhead primarily to additional system calls for daemon management and port mapping, which become negligible as the scale of the computation grows.
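The relative overhead implied by the quoted single-block and multi-block figures can be checked with a few lines of Python. The helper name `relative_change_percent` is an assumption, and the inputs are only the four numbers quoted in the paragraph above.

```python
def relative_change_percent(baseline, measured):
    """Return the change from `baseline` to `measured` as a percentage of baseline."""
    return (measured - baseline) / baseline * 100.0


# Figures quoted above: single-block value first, multi-block value second.
latency_change = relative_change_percent(1.2, 1.3)      # milliseconds
bandwidth_change = relative_change_percent(1.8, 1.7)    # GB/s

if __name__ == "__main__":
    print(f"latency change:   {latency_change:+.1f}%")
    print(f"bandwidth change: {bandwidth_change:+.1f}%")
```

With these inputs the latency rises by about 8.3% and the bandwidth falls by about 5.6%.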

The discussion highlights scalability considerations: while the prototype works well up to several hundred nodes, operating system limits on file descriptors and process counts must be tuned for larger installations. Security could be further hardened by integrating lightweight container technologies (LXC/Docker) and strengthening SELinux policies. The web interface, though intuitive, may experience latency under heavy job submission loads, suggesting future work on asynchronous job queues and front‑end optimization.

In conclusion, the multi‑block, multi‑daemon architecture offers a practical and reliable solution for public clusters that need to support concurrent, isolated MPI jobs. It balances ease of use (via a web portal), security (through per‑user isolation), and performance (with only a minimal overhead). The authors argue that this design can serve as a reference model for future open‑access high‑performance computing facilities aimed at education, research, and community outreach.
