Scaling rules in the science system: influence of field-specific citation characteristics on the impact of research groups

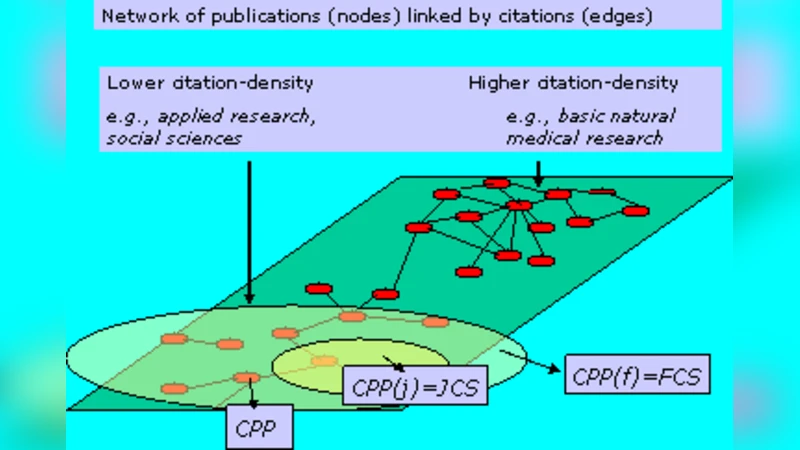

We propose a representation of science as a citation-density landscape and investigate scaling rules with the field-specific citation density as a main topological property. We focus on the size-dependence of several main bibliometric indicators for a large set of research groups while distinguishing between top-performance and lower performance groups. We demonstrate that this representation of the science system is particularly effective to understand the role and the interdependencies of the different bibliometric indicators and related topological properties of the landscape.

💡 Research Summary

The paper introduces a novel conceptualization of science as a “citation‑density landscape,” where each scientific field is characterized by its field‑specific citation density (FID)—the average number of citations per paper within that field. Using a large empirical dataset comprising over two thousand research groups from a ten‑year period (1995‑2005), the authors examine how the size of a research group (measured by the number of publications, N) influences several core bibliometric indicators: total citations (C), average citations per paper (C̄), the h‑index, and a field‑normalized citation impact (FNCI).

The first analytical step establishes a power‑law relationship between total citations and group size, C ∝ N^β. For small groups β is close to 1, indicating near‑linear growth of citations with output. Beyond a threshold of roughly 30‑50 publications, β drops below 1, revealing a sub‑linear scaling that the authors interpret as a “size‑related inefficiency”: larger groups generate proportionally fewer citations per additional paper.
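
The exponent β in C ∝ N^β is commonly estimated as the slope of a straight-line fit of log C against log N. The summary does not state the authors' fitting procedure, so this is a minimal sketch that assumes ordinary least squares on log-transformed counts. `fit_scaling_exponent` and `fit_two_regimes`, along with its `threshold` parameter, are hypothetical helpers for illustration only.

```python
import math


def fit_scaling_exponent(sizes, citations):
    """Estimate a and beta in C = a * N**beta by least squares on log-log data.

    sizes: publication counts N per group (all > 0)
    citations: total citation counts C per group (all > 0)
    """
    if len(sizes) != len(citations) or len(sizes) < 2:
        raise ValueError("need at least two (N, C) pairs of equal length")
    xs = [math.log(n) for n in sizes]
    ys = [math.log(c) for c in citations]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("sizes must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    beta = sxy / sxx  # slope in log-log space is the scaling exponent
    a = math.exp(mean_y - beta * mean_x)  # intercept gives the prefactor a
    return beta, a


def fit_two_regimes(sizes, citations, threshold):
    """Fit beta separately below and at/above a (hypothetical) size threshold."""
    small = [(n, c) for n, c in zip(sizes, citations) if n < threshold]
    large = [(n, c) for n, c in zip(sizes, citations) if n >= threshold]
    beta_small, _ = fit_scaling_exponent(*zip(*small))
    beta_large, _ = fit_scaling_exponent(*zip(*large))
    return beta_small, beta_large
```

For example, `fit_two_regimes(sizes, citations, threshold=40)` would compare β below and above 40 publications, a cut-off taken from the 30 to 50 range mentioned above. Groups with zero citations would need to be excluded or handled separately, because log 0 is undefined.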

A second, crucial dimension is the field‑specific citation density. High‑density fields such as molecular biology or physics exhibit lower β values (≈0.6‑0.7), meaning that the inefficiency sets in earlier and is more pronounced. Low‑density fields (e.g., sociology, humanities) show β values nearer to 0.9, indicating that scaling up does not erode citation efficiency as strongly. The authors argue that the slope of the citation‑density landscape modulates the scaling exponent, effectively linking topological properties of the scientific system to observable performance metrics.
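
Comparing β across fields amounts to repeating the log-log fit within each citation-density class. The minimal sketch below assumes that each group is tagged with its field's mean citations per paper and that a single hypothetical `density_threshold` divides high-density from low-density fields. The summary does not specify how fields were binned.

```python
import math
from collections import defaultdict


def loglog_slope(sizes, citations):
    """OLS slope of log(C) against log(N), i.e. the scaling exponent beta."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(c) for c in citations]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def beta_by_density_class(groups, density_threshold):
    """Fit beta separately for high- and low-density fields.

    groups: iterable of (field_density, N, C) tuples, where field_density is
    the field's mean citations per paper. density_threshold is a
    hypothetical cut-off separating 'high' from 'low' density fields.
    """
    classes = defaultdict(lambda: ([], []))
    for density, n, c in groups:
        key = "high" if density >= density_threshold else "low"
        sizes, cites = classes[key]
        sizes.append(n)
        cites.append(c)
    return {key: loglog_slope(ns, cs) for key, (ns, cs) in classes.items()}
```

A finer analysis could replace the two classes with several density bins, or treat density as a continuous covariate. The two-class split simply mirrors the high/low contrast described above.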

The third analytical layer separates groups by performance. Using a composite performance score derived from total and average citations, the top 10 % of groups (high‑performers) display markedly lower β values than the bottom 10 % (low‑performers). In high‑density fields, top performers’ β can fall below 0.55, suggesting a steep trade‑off between quality and quantity: as these groups grow, their per‑paper impact declines sharply. Low‑performers, by contrast, often have β close to or slightly above 1, implying that their citation efficiency is relatively insensitive to size, or even improves modestly with expansion.
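
The top/bottom comparison works in two stages: rank groups by a performance score, then fit β within each tail. The summary says only that the composite score is derived from total and average citations. This sketch therefore assumes a hypothetical `composite_score`, the geometric mean of C and C/N, and lets callers substitute their own score.

```python
import math


def loglog_slope(groups):
    """OLS slope of log(C) against log(N) for a list of (N, C) pairs."""
    xs = [math.log(n) for n, _ in groups]
    ys = [math.log(c) for _, c in groups]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def composite_score(n, c):
    """Hypothetical composite: geometric mean of total (C) and average (C/N) citations."""
    return math.sqrt(c * (c / n))


def beta_top_bottom(groups, fraction=0.10, score=composite_score):
    """Return (beta_top, beta_bottom) for the top and bottom `fraction` of groups.

    groups: list of (N, C) pairs. At least two groups are kept per subset so
    that a slope can be fitted; they must not all share the same N.
    """
    ranked = sorted(groups, key=lambda g: score(*g), reverse=True)
    k = max(2, int(len(ranked) * fraction))
    return loglog_slope(ranked[:k]), loglog_slope(ranked[-k:])
```

Because the score itself depends on N, the choice of composite affects which groups land in each tail. That is one reason to report the score definition alongside any fitted β.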

The fourth component investigates composite indicators. The h‑index scales almost linearly with N (size exponent ≈1), reflecting its sensitivity to sheer output volume. In contrast, FNCI, which is normalized by the field‑specific citation density, shows negligible dependence on size (size exponent ≈0), confirming its robustness against size bias. This distinction underscores the importance of indicator choice when evaluating research groups across diverse fields and sizes.
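
The two indicators can be computed as follows. This sketch uses the standard h-index definition: the largest h such that h papers each have at least h citations. It assumes FNCI is a group's mean citations per paper divided by its field's mean citations per paper. The summary does not give the exact normalization, so `fnci` and its parameters are illustrative.

```python
def h_index(citations_per_paper):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations_per_paper, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def fnci(n_papers, total_citations, field_density):
    """Assumed FNCI: group's mean citations per paper over the field's mean."""
    return (total_citations / n_papers) / field_density
```

The h-index is bounded above by the number of papers, so it tends to grow with output. FNCI is a ratio of averages, so on its own it carries no direct dependence on N.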

To translate these findings into actionable insight, the authors construct a two‑dimensional “science system map” with axes for group size and field citation density, overlaying performance contours. Simulations on this map reveal policy implications: allocating large, centralized research facilities to high‑density fields can boost total citations in the short term but exacerbates the sub‑linear scaling, ultimately reducing overall citation efficiency. Conversely, fostering numerous small‑to‑medium, high‑quality teams in low‑density fields maintains near‑linear scaling and yields a higher proportion of high‑impact work per unit of investment.
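
The arithmetic behind the "many smaller teams" argument follows from the power law. If a fixed output of N papers is split evenly over k groups, each following C = a·N^β, the total is k·a·(N/k)^β = a·N^β·k^(1−β). This total grows with k whenever β < 1. The sketch below is a stylized check under that assumption only. It is not the authors' simulation, and it ignores the possibility that splitting changes a group's performance class or β.

```python
def total_citations_split(total_papers, n_groups, a, beta):
    """Total citations when total_papers are divided evenly over n_groups,
    each group following C = a * N**beta (stylized assumption)."""
    if n_groups < 1:
        raise ValueError("n_groups must be at least 1")
    per_group = total_papers / n_groups
    return n_groups * a * per_group ** beta
```

With β = 0.7, splitting 100 papers over four groups instead of one multiplies total citations by 4^0.3 (about 1.5). With β = 1 the split makes no difference.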

In sum, the study demonstrates that the scientific ecosystem exhibits field‑dependent scaling laws, where both the topological property of citation density and the intrinsic performance level of groups shape how size translates into impact. The authors advocate for research‑evaluation frameworks and funding strategies that jointly consider field‑specific citation characteristics and group size, rather than relying on naïve size‑oriented metrics. By doing so, policymakers can better balance the trade‑offs between expanding research capacity and preserving or enhancing the per‑paper impact that drives scientific progress.
