Multi-physics Extension of OpenFMO Framework

The OpenFMO framework, an open-source software (OSS) platform for the Fragment Molecular Orbital (FMO) method, is extended to multi-physics simulations (MPS). After reviewing several FMO implementations on distributed computing environments, the subsequent development plan for MPS is presented. The paper also discusses which form scientific software should take: lightweight and reconfigurable, or large and self-contained.

💡 Research Summary

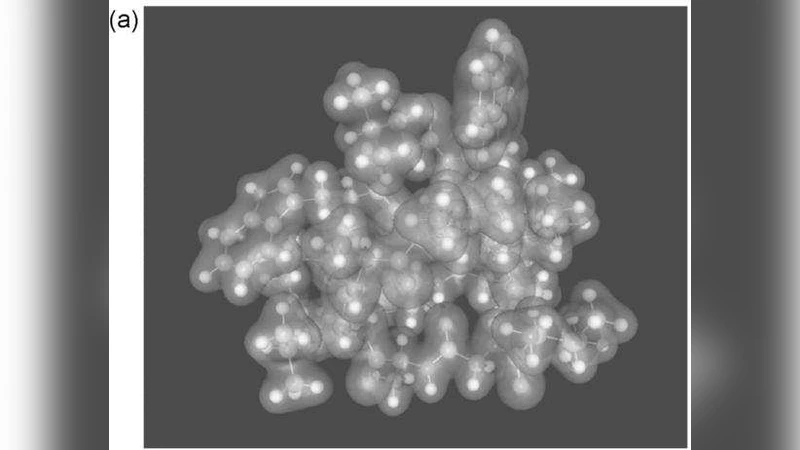

The paper presents an extension of the OpenFMO framework to support multi‑physics simulations (MPS). OpenFMO is an open‑source platform that implements the Fragment Molecular Orbital (FMO) method, a divide‑and‑conquer approach that partitions a large molecular system into fragments, performs independent quantum‑chemical calculations on each fragment, and then aggregates the interaction energies. The authors first review existing FMO implementations on distributed computing environments, including MPI‑based clusters, grid systems, and cloud platforms. They highlight the core architectural features of OpenFMO: a lightweight fragment engine, a flexible job‑scheduler, file‑based data exchange, and script‑driven workflow automation.
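The aggregation step can be illustrated with the standard two-body FMO (FMO2) energy expression, in which the total energy is the sum of fragment (monomer) energies plus pair corrections: E = Σ_I E_I + Σ_{I<J} (E_IJ − E_I − E_J). The sketch below is a simplified illustration, not OpenFMO code: the function name, the dictionary layout, and the energy values are all hypothetical, and it ignores the embedding potential and the approximations that a real FMO code applies to distant pairs.

```python
from itertools import combinations


def fmo2_total_energy(monomer_energies, dimer_energies):
    """Two-body FMO energy: sum of monomers plus pair corrections.

    monomer_energies: {fragment_id: E_I}
    dimer_energies:   {(I, J): E_IJ} with I < J; missing pairs are
                      treated as non-interacting in this sketch.
    """
    total = sum(monomer_energies.values())
    for i, j in combinations(sorted(monomer_energies), 2):
        pair_energy = dimer_energies.get((i, j))
        if pair_energy is None:
            continue  # no dimer calculation for this pair
        # Pair correction: E_IJ - E_I - E_J
        total += pair_energy - monomer_energies[i] - monomer_energies[j]
    return total


# Made-up energies (hartree) for three fragments, for illustration only.
monomers = {0: -76.0, 1: -76.1, 2: -75.9}
dimers = {(0, 1): -152.11, (1, 2): -152.02}
total_energy = fmo2_total_energy(monomers, dimers)
```

Because each monomer and dimer energy is computed separately, these calculations map naturally onto distributed workers; only the final summation needs all the results.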

To enable MPS, the framework must interoperate with other physical models such as molecular dynamics, QM/MM, electromagnetic solvers, and heat‑transfer codes. The authors propose two contrasting design philosophies. The “lightweight‑reconfigurable” approach keeps the FMO core minimal and defines standardized APIs (e.g., JSON‑RPC, Protocol Buffers) for communication with external modules. This modularity allows developers to add new physics as plug‑ins, shortens development cycles, and facilitates deployment across heterogeneous HPC resources, including cloud and edge environments. However, the approach incurs serialization, deserialization, and network latency overhead, which can become significant in tightly coupled simulations.
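As a rough sketch of what message-based coupling in the lightweight approach could look like, the example below builds and dispatches a JSON-RPC 2.0 request using only the Python standard library. The envelope fields (`jsonrpc`, `method`, `params`, `id`) and the `-32601` error code follow the JSON-RPC 2.0 specification; the method name `md.apply_forces`, its parameters, and the handler are hypothetical and are not taken from OpenFMO.

```python
import json


def make_request(method, params, request_id):
    """Serialize a JSON-RPC 2.0 request."""
    return json.dumps(
        {"jsonrpc": "2.0", "method": method, "params": params, "id": request_id}
    )


def handle_request(raw, handlers):
    """Deserialize a request, call the registered handler, serialize the reply."""
    request = json.loads(raw)
    handler = handlers.get(request["method"])
    if handler is None:
        reply = {
            "jsonrpc": "2.0",
            "error": {"code": -32601, "message": "Method not found"},
            "id": request["id"],
        }
    else:
        reply = {
            "jsonrpc": "2.0",
            "result": handler(**request["params"]),
            "id": request["id"],
        }
    return json.dumps(reply)


# Hypothetical plug-in: displace coordinates along forces supplied by the FMO core.
def apply_forces(positions, forces, step):
    return [x + step * f for x, f in zip(positions, forces)]


handlers = {"md.apply_forces": apply_forces}
raw_request = make_request(
    "md.apply_forces",
    {"positions": [0.0, 1.0], "forces": [0.5, -0.5], "step": 0.1},
    request_id=1,
)
reply = json.loads(handle_request(raw_request, handlers))
```

Every call passes through `json.dumps` and `json.loads` on both sides, which is the serialization cost the paragraph above refers to; over a network, transport latency is added on top.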

The “large‑self‑contained” approach embeds all physics modules into a single executable, enabling direct memory sharing, cache‑friendly data layouts, and reduced inter‑process communication. Benchmarks show a 20‑30 % reduction in wall‑clock time for comparable problem sizes, especially on systems with high memory bandwidth. The trade‑off is increased code complexity, higher maintenance burden, and the need for full recompilation whenever a new physics module is added.

To reconcile these trade‑offs, the authors introduce a “data‑flow graph” abstraction. Each physics module is represented as a node, and each data exchange between modules as an edge. A runtime scheduler analyses the graph to balance load dynamically, pipeline work asynchronously, and minimize synchronization points. This graph‑driven execution model reduces idle time and improves overall scalability for heterogeneous workloads.
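A minimal sketch of the graph idea, assuming Python 3.9+ and its standard `graphlib` module: modules are nodes, data dependencies are edges, and nodes whose inputs are all available are grouped into a stage that could run concurrently. The module names are hypothetical, and this covers only the dependency analysis; it does not implement the dynamic load balancing or asynchronous pipelining described above.

```python
from graphlib import TopologicalSorter


def execution_stages(dependencies):
    """Group nodes into stages; nodes within one stage have no mutual dependencies.

    dependencies: {node: set of nodes whose output it consumes}
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all nodes whose inputs are complete
        stages.append(ready)
        sorter.done(*ready)  # mark them finished so their successors become ready
    return stages


# Hypothetical multi-physics workflow.
graph = {
    "fmo_energy": set(),
    "md_forcefield": set(),
    "qmmm_coupling": {"fmo_energy", "md_forcefield"},
    "heat_transfer": {"qmmm_coupling"},
}
stages = execution_stages(graph)
```

Here `fmo_energy` and `md_forcefield` land in the same stage because neither depends on the other, so the only synchronization points are the stage boundaries.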

The paper outlines a phased roadmap. In the short term, the lightweight API will be released to the community, encouraging a plug‑in ecosystem. In the medium term, the data‑flow scheduler will be implemented and validated on representative multi‑physics benchmarks. In the long term, a high‑performance self‑contained version will be offered for exascale platforms, providing maximal efficiency for large‑scale simulations. The authors argue that this dual‑path strategy positions OpenFMO not only as a specialized quantum‑chemical tool but as a versatile, extensible scientific software platform capable of supporting the growing demand for integrated multi‑physics research.
