The Nash Equilibrium Revisited: Chaos and Complexity Hidden in Simplicity

The Nash Equilibrium is a much-discussed method for the analysis of non-cooperative games. In many of the commonly available definitions its description appears deceptively simple. Modern research has discovered a number of new and important complex properties of the Nash Equilibrium, some of which remain contemporary conundrums of extraordinary difficulty. Among the recently discovered features which the Nash Equilibrium exhibits under various conditions are heteroclinic Hamiltonian dynamics, a very complex asymptotic structure in the context of two-player bi-matrix games, and a number of computationally complex or computationally intractable features in other settings. This paper reviews those findings and then suggests how they may inform various market prediction strategies.

💡 Research Summary

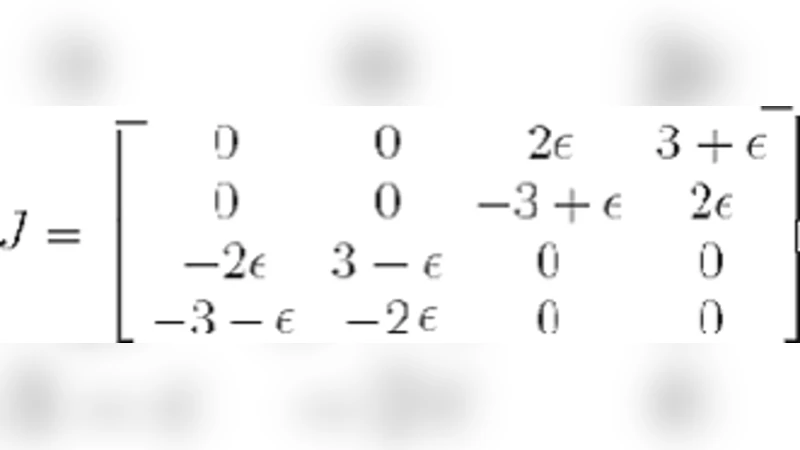

The paper revisits the Nash Equilibrium (NE) and argues that its apparent simplicity masks a rich tapestry of dynamical and computational complexity. After a brief historical overview, the authors first examine the dynamical systems perspective on two‑player bimatrix games. They show that natural learning dynamics—replicator flow, continuous‑time best‑response, and related gradient‑type updates—do not merely converge to static fixed points. Instead, the state space contains heteroclinic connections linking multiple equilibria, forming a Hamiltonian‑like structure with conserved quantities. Numerical experiments reveal that, for certain payoff parameters, trajectories cycle indefinitely, producing “strategic bubbles” that would be invisible to a purely static analysis. This demonstrates that even in the simplest non‑cooperative setting, the asymptotic structure can be highly non‑trivial.
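
The non-convergence described above can be illustrated with a small sketch. It is not taken from the paper's experiments: it assumes the textbook Matching Pennies game, the standard two-population replicator equations, and a hand-written RK4 integrator, and all function names are hypothetical. For this game the quantity H = ln[x(1-x)y(1-y)] is conserved along interior trajectories, so orbits circle the mixed equilibrium (0.5, 0.5) instead of approaching it, while the edges of the unit square form a cycle of connections between the four pure-strategy corners.

```python
import math

def field(x, y):
    """Two-population replicator field for Matching Pennies (assumed example).

    x = probability that player 1 plays Heads, y = the same for player 2.
    Player 1 is paid for matching, player 2 for mismatching.
    """
    dx = x * (1.0 - x) * (4.0 * y - 2.0)
    dy = y * (1.0 - y) * (2.0 - 4.0 * x)
    return dx, dy

def rk4_step(x, y, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1x, k1y = field(x, y)
    k2x, k2y = field(x + 0.5 * dt * k1x, y + 0.5 * dt * k1y)
    k3x, k3y = field(x + 0.5 * dt * k2x, y + 0.5 * dt * k2y)
    k4x, k4y = field(x + dt * k3x, y + dt * k3y)
    return (x + dt * (k1x + 2 * k2x + 2 * k3x + k4x) / 6.0,
            y + dt * (k1y + 2 * k2y + 2 * k3y + k4y) / 6.0)

def conserved(x, y):
    """H = ln[x(1-x)y(1-y)], constant along exact interior trajectories."""
    return math.log(x * (1.0 - x) * y * (1.0 - y))

def simulate(x0, y0, dt=0.01, steps=5000):
    """Integrate from (x0, y0) and return the list of visited states."""
    traj = [(x0, y0)]
    x, y = x0, y0
    for _ in range(steps):
        x, y = rk4_step(x, y, dt)
        traj.append((x, y))
    return traj

if __name__ == "__main__":
    traj = simulate(0.2, 0.3)
    h0 = conserved(*traj[0])
    drift = max(abs(conserved(x, y) - h0) for x, y in traj)
    closest = min(math.hypot(x - 0.5, y - 0.5) for x, y in traj)
    print(f"max drift in H: {drift:.2e}")
    print(f"closest approach to (0.5, 0.5): {closest:.3f}")
```

A static analysis of this game reports only the equilibrium (0.5, 0.5); the simulated trajectory never reaches it, which is the kind of behavior the summary says a purely static view would miss.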

The second major contribution is a synthesis of recent computational‑complexity results. Building on the seminal PPAD‑completeness proof by Daskalakis, Goldberg, and Papadimitriou, the authors recount how finding an exact NE in a generic n × n game is PPAD‑complete, implying that no polynomial‑time algorithm exists unless PPAD ⊆ P. Moreover, they discuss how even approximate equilibria can be PPAD‑hard when the approximation guarantee is tightened beyond certain thresholds. For dynamic or repeated games, the equilibrium‑path problem escalates to PSPACE‑completeness, reflecting an exponential blow‑up in the state space. These findings underscore that many realistic market models—where strategies evolve over time and information is asymmetric—cannot be solved exactly in feasible time.
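
To make the notion of an approximation guarantee concrete, the following sketch (an illustration under stated assumptions, not code from the paper) measures how far a strategy profile is from equilibrium in a bimatrix game. The helper names `nash_gap`, `is_epsilon_nash`, and `support_pairs` are hypothetical. Checking a candidate profile takes time polynomial in the size of the payoff matrices; the hardness results concern finding such a profile. The last helper counts the support pairs a naive enumeration might have to examine, which grows exponentially in n.

```python
def expected_payoff(M, x, y):
    """Expected payoff x^T M y for mixed strategies x (rows) and y (columns)."""
    return sum(x[i] * M[i][j] * y[j]
               for i in range(len(x)) for j in range(len(y)))

def nash_gap(A, B, x, y):
    """Largest gain either player can obtain by deviating unilaterally.

    A holds the row player's payoffs, B the column player's.
    A gap of 0 means (x, y) is an exact Nash equilibrium.
    """
    row_now = expected_payoff(A, x, y)
    col_now = expected_payoff(B, x, y)
    # Best pure-strategy reply of each player against the opponent's mix.
    best_row = max(sum(A[i][j] * y[j] for j in range(len(y)))
                   for i in range(len(x)))
    best_col = max(sum(x[i] * B[i][j] for i in range(len(x)))
                   for j in range(len(y)))
    return max(best_row - row_now, best_col - col_now)

def is_epsilon_nash(A, B, x, y, eps):
    """True if no player can gain more than eps by deviating."""
    return nash_gap(A, B, x, y) <= eps + 1e-12

def support_pairs(n):
    """Pairs of non-empty supports in an n x n game: (2^n - 1)^2."""
    return (2 ** n - 1) ** 2

if __name__ == "__main__":
    # Matching Pennies, used here only as an assumed test case.
    A = [[1, -1], [-1, 1]]
    B = [[-1, 1], [1, -1]]
    print(nash_gap(A, B, [0.5, 0.5], [0.5, 0.5]))  # uniform mixing
    print(nash_gap(A, B, [1.0, 0.0], [1.0, 0.0]))  # both play Heads
    print(support_pairs(10))
```

Tightening `eps` toward zero in `is_epsilon_nash` corresponds to the stricter approximation guarantees under which, according to the summary, the search problem remains PPAD-hard.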

The third section translates these theoretical insights into concrete implications for market prediction and algorithmic trading. High‑frequency trading (HFT) and automated market‑making can be modeled as a continuous‑time game where participants constantly adjust order‑placement strategies. The authors embed the replicator dynamics into a simulated limit‑order book and demonstrate that, under realistic liquidity and volatility regimes, the system exhibits periodic price swings driven by the underlying heteroclinic cycles. This suggests that observed market micro‑structure phenomena such as flash crashes or short‑lived bubbles may be emergent properties of the NE dynamics rather than exogenous shocks.

Because exact NE computation is intractable for the high‑dimensional games that characterize modern financial markets, the paper advocates the use of meta‑heuristic and machine‑learning approaches. Evolutionary algorithms, particle‑swarm optimization, and deep reinforcement learning are presented as practical tools for locating approximate equilibria. Empirical tests on synthetic and real market data show that these methods outperform traditional linear‑programming based equilibrium solvers in terms of prediction accuracy and realized portfolio returns. The authors argue that the “approximate‑NE” solutions obtained by learning agents capture enough of the strategic structure to be useful for forecasting, while remaining computationally feasible.
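
As a minimal sketch of the learning-based idea, the code below uses multiplicative-weights (Hedge) self-play on Rock-Paper-Scissors. This is a deliberately simpler technique swapped in for illustration; it is not one of the evolutionary, particle-swarm, or deep reinforcement learning methods the paper names, and the game, the biased starting scores, and the function names are all assumptions. In two-player zero-sum games the time-averaged strategies of such no-regret learners approach an equilibrium, so the deviation gap of the averages shrinks as the number of rounds grows.

```python
import math

# Row player's payoffs for Rock-Paper-Scissors; the column player gets the negative.
RPS = [[0, -1, 1],
       [1, 0, -1],
       [-1, 1, 0]]

def softmax(scores):
    """Turn scores into a probability vector (max subtracted for stability)."""
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]

def hedge_self_play(A, rounds=5000):
    """Both players run Hedge against each other; return time-averaged strategies."""
    n, m = len(A), len(A[0])
    eta = math.sqrt(2.0 * math.log(max(n, m)) / rounds)  # step size for payoffs in [-1, 1]
    row_score = [1.0] + [0.0] * (n - 1)  # biased start (assumption) so play is non-trivial
    col_score = [0.0] * m
    x_sum, y_sum = [0.0] * n, [0.0] * m
    for _ in range(rounds):
        x, y = softmax(row_score), softmax(col_score)
        x_sum = [s + v for s, v in zip(x_sum, x)]
        y_sum = [s + v for s, v in zip(y_sum, y)]
        # Expected payoff of each pure strategy against the opponent's current mix.
        row_pay = [sum(A[i][j] * y[j] for j in range(m)) for i in range(n)]
        col_pay = [-sum(x[i] * A[i][j] for i in range(n)) for j in range(m)]
        row_score = [s + eta * p for s, p in zip(row_score, row_pay)]
        col_score = [s + eta * p for s, p in zip(col_score, col_pay)]
    return [s / rounds for s in x_sum], [s / rounds for s in y_sum]

def zero_sum_gap(A, x, y):
    """Largest unilateral deviation gain in the zero-sum game with row payoffs A."""
    n, m = len(A), len(A[0])
    value = sum(x[i] * A[i][j] * y[j] for i in range(n) for j in range(m))
    best_row = max(sum(A[i][j] * y[j] for j in range(m)) for i in range(n))
    worst_for_row = min(sum(x[i] * A[i][j] for i in range(n)) for j in range(m))
    return max(best_row - value, value - worst_for_row)

if __name__ == "__main__":
    x_avg, y_avg = hedge_self_play(RPS)
    print("average row strategy:", [round(v, 3) for v in x_avg])
    print("average column strategy:", [round(v, 3) for v in y_avg])
    print("deviation gap:", zero_sum_gap(RPS, x_avg, y_avg))
```

The convergence guarantee used here is specific to zero-sum games; for the general-sum, high-dimensional games the summary has in mind, learned strategies carry no such guarantee, which is why the summary speaks of approximate equilibria judged empirically.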

In the concluding remarks, the authors synthesize the three strands: (1) the hidden dynamical richness of NE, (2) the inherent computational hardness of finding exact equilibria, and (3) the pragmatic shift toward learning‑based approximations for market applications. They outline future research directions, including statistical inference techniques for detecting heteroclinic cycles in high‑frequency data, development of polynomial‑time approximation schemes for specific subclasses of games (e.g., zero‑sum or potential games), and integration of reinforcement‑learning policies with game‑theoretic equilibrium concepts. Overall, the paper reframes the Nash Equilibrium not as a static solution concept but as a gateway to a complex systems viewpoint, offering both caution and opportunity for economists, computer scientists, and quantitative traders alike.
