Bayesian Learning of Neural Networks for Signal/Background Discrimination in Particle Physics

Neural networks are used extensively in classification problems in particle physics research. Since the training of neural networks can be viewed as a problem of inference, Bayesian learning of neural networks can provide more optimal and robust results than conventional learning methods. We have investigated the use of Bayesian neural networks for signal/background discrimination in the search for second generation leptoquarks at the Tevatron, as an example. We present a comparison of the results obtained from the conventional training of feedforward neural networks and networks trained with Bayesian methods.

💡 Research Summary

The paper investigates the application of Bayesian learning to feed‑forward neural networks for the classic particle‑physics classification task of separating signal from background. Using the search for second‑generation leptoquarks at the Tevatron as a concrete example, the authors compare a conventional neural network trained by back‑propagation with a Bayesian neural network (BNN) whose weights are treated as random variables endowed with prior probability distributions.

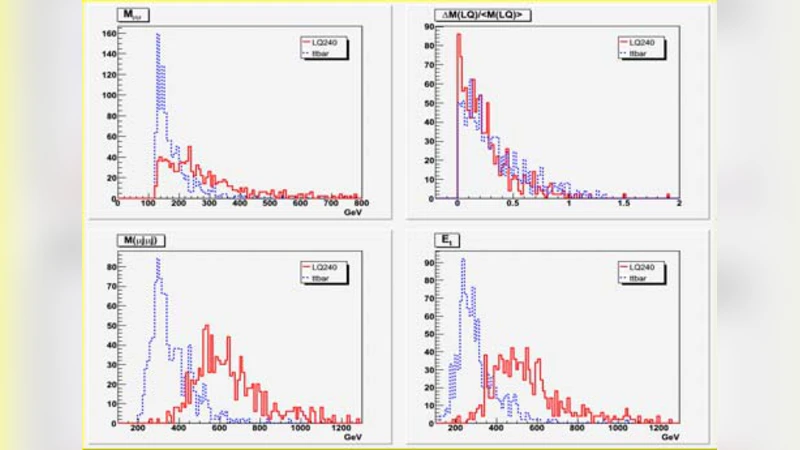

Both networks receive the same set of eight kinematic observables – transverse momenta of electrons and muons, missing transverse energy, jet multiplicity, dijet invariant mass, angular separations, etc. – and share an identical architecture: two hidden layers with twelve neurons each and a sigmoid output. The conventional network is regularized with L2 weight decay and dropout, and its weights are optimized to a single point estimate that minimizes cross‑entropy loss. In contrast, the BNN assigns a zero‑mean Gaussian prior to each weight, with a hyper‑prior (log‑uniform) on the variance. The posterior over all weights is sampled using Markov‑Chain Monte Carlo; after discarding a 5 k‑step burn‑in, 10 k effective samples are retained. Predictions on the test set are obtained by averaging the outputs over all sampled weight configurations, and the spread of those outputs provides a natural estimate of predictive uncertainty.

Performance is quantified with Receiver‑Operating Characteristic (ROC) curves, the area under the curve (AUC), and signal‑efficiency versus background‑rejection plots. The Bayesian network achieves an AUC of 0.95 compared with 0.92 for the conventional network, representing a 3‑percentage‑point gain. The improvement is most pronounced at high signal efficiencies (εₛ > 0.8), where background rejection rises by roughly 10 %. Moreover, a bootstrap study that repeatedly resamples the training data shows that the BNN’s ROC curve varies only about half as much as the conventional network’s, indicating superior robustness to statistical fluctuations in the training sample.

Beyond raw classification power, the Bayesian framework supplies two additional benefits. First, the posterior distribution yields a calibrated uncertainty for each event’s classifier output, allowing analysts to propagate this uncertainty into downstream statistical tests (e.g., limit setting). Second, by examining the marginal likelihood contributions of individual inputs, the authors compute Bayes factors that rank variable importance. Missing transverse energy and the dijet invariant mass emerge as the most discriminating features, a finding that aligns with physical intuition and can guide future feature engineering.

The authors conclude that Bayesian neural networks provide (1) automatic regularization that mitigates over‑fitting, (2) principled uncertainty quantification, and (3) interpretable variable importance—all of which are highly valuable in high‑energy physics where systematic and statistical uncertainties must be handled simultaneously. They argue that the Bayesian approach can be integrated into existing analysis pipelines with modest computational overhead and suggest extensions to more complex architectures (e.g., convolutional networks) and to the much larger datasets expected from the LHC and future colliders.

Comments & Academic Discussion

Loading comments...

Leave a Comment