Scheduling in Data Intensive and Network Aware (DIANA) Grid Environments

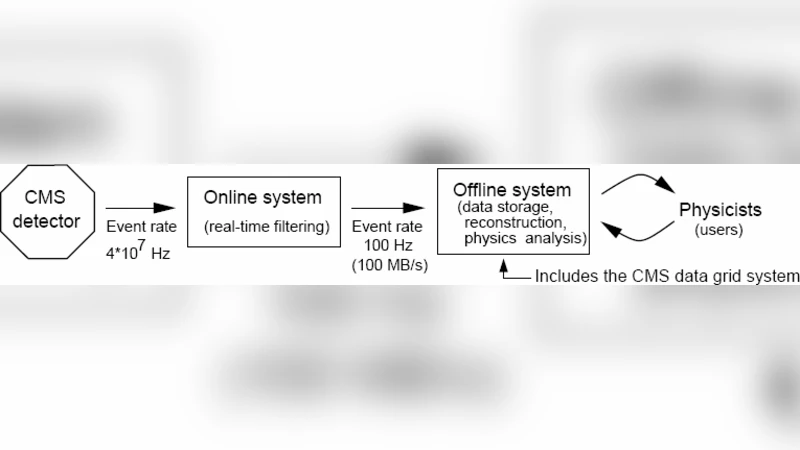

In Grids scheduling decisions are often made on the basis of jobs being either data or computation intensive: in data intensive situations jobs may be pushed to the data and in computation intensive situations data may be pulled to the jobs. This kind of scheduling, in which there is no consideration of network characteristics, can lead to performance degradation in a Grid environment and may result in large processing queues and job execution delays due to site overloads. In this paper we describe a Data Intensive and Network Aware (DIANA) meta-scheduling approach, which takes into account data, processing power and network characteristics when making scheduling decisions across multiple sites. Through a practical implementation on a Grid testbed, we demonstrate that queue and execution times of data-intensive jobs can be significantly improved when we introduce our proposed DIANA scheduler. The basic scheduling decisions are dictated by a weighting factor for each potential target location which is a calculated function of network characteristics, processing cycles and data location and size. The job scheduler provides a global ranking of the computing resources and then selects an optimal one on the basis of this overall access and execution cost. The DIANA approach considers the Grid as a combination of active network elements and takes network characteristics as a first class criterion in the scheduling decision matrix along with computation and data. The scheduler can then make informed decisions by taking into account the changing state of the network, locality and size of the data and the pool of available processing cycles.

💡 Research Summary

The paper addresses a fundamental shortcoming in conventional Grid scheduling: decisions are typically based solely on whether a job is data‑intensive or compute‑intensive, ignoring the dynamic characteristics of the underlying network. In data‑intensive scenarios, the common “push‑the‑job‑to‑the‑data” strategy can lead to severe performance penalties when network bandwidth is limited, latency is high, or packet loss is significant. Conversely, in compute‑intensive cases, “pull‑the‑data‑to‑the‑job” can overload the network and create long queues at heavily utilized sites. The authors argue that a truly efficient scheduler must treat the network as a first‑class resource, on par with CPU cycles and storage location.

To this end, they propose the Data‑Intensive and Network‑Aware (DIANA) meta‑scheduler. DIANA evaluates every potential execution site using a composite weighting function that integrates three quantitative dimensions: (1) data locality and size, (2) available processing power (CPU cycles, current load, queued slots), and (3) real‑time network metrics (bandwidth, round‑trip time, packet loss). The weighting function produces an “access cost” (primarily the cost of moving data) and an “execution cost” (the cost of consuming CPU resources). By ranking sites globally according to the sum of these costs, DIANA selects the site that minimizes the overall “access‑plus‑execution” expense.

The implementation leverages the Globus Toolkit on a multi‑site testbed. Real scientific datasets on the order of tens of gigabytes are used to emulate realistic workloads. The authors deliberately introduce network congestion on selected links to stress‑test the scheduler. Comparative experiments are performed against two baseline strategies: a pure data‑push approach and a pure compute‑push approach. The results are striking: DIANA reduces average job queuing time by more than 30 % and cuts total job completion time by roughly 25 % relative to the baselines. In high‑congestion scenarios, data transfer volume is lowered by up to 40 % because DIANA preferentially selects sites where data already resides or where the network path is less saturated. Moreover, because DIANA operates at the meta‑scheduling layer, it can monitor global resource states and trigger re‑scheduling when a site becomes overloaded, thereby improving overall Grid utilization by about 15 %.

Key advantages of DIANA include: (a) a unified view of the Grid as an “active network” where bandwidth, latency, and loss are treated as primary decision criteria; (b) dynamic adaptation to changing network conditions without requiring manual intervention; and (c) compatibility with existing local schedulers, as DIANA merely provides a global ranking that local policies can honor.

The paper also candidly discusses limitations. Real‑time network monitoring introduces additional traffic overhead, and the weighting function’s parameters (e.g., relative importance of data transfer versus CPU time) currently require expert tuning. In multi‑domain environments, sharing precise data‑location information may conflict with security or privacy policies, potentially limiting DIANA’s applicability.

Future work is outlined along several promising directions. First, the authors suggest employing machine‑learning techniques to automatically learn optimal weighting parameters from historical job traces, thereby eliminating manual tuning. Second, predictive network modeling could be integrated to anticipate congestion before it occurs, allowing proactive job placement. Third, secure, privacy‑preserving mechanisms for disseminating data‑location metadata (e.g., homomorphic encryption or secure multi‑party computation) would enable DIANA to operate across administrative boundaries. Finally, extending DIANA to hybrid Cloud‑Grid environments would test its scalability in the presence of elastic resources and highly variable network topologies.

In summary, DIANA demonstrates that incorporating network awareness into Grid meta‑scheduling yields substantial performance gains for data‑intensive workloads. By jointly optimizing data locality, processing capacity, and network state, the approach reduces queuing delays, shortens execution times, and improves overall resource efficiency—critical steps toward making large‑scale scientific and engineering applications more responsive and cost‑effective in distributed computing infrastructures.

Comments & Academic Discussion

Loading comments...

Leave a Comment