A Multi Interface Grid Discovery System

Discovery Systems (DS) can be considered as entry points for global loosely coupled distributed systems. An efficient Discovery System in essence increases the performance, reliability and decision ma

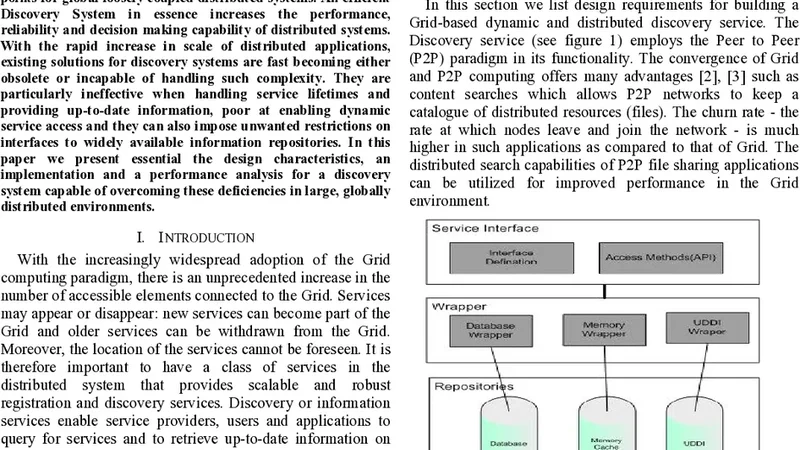

Discovery Systems (DS) can be considered as entry points for global loosely coupled distributed systems. An efficient Discovery System in essence increases the performance, reliability and decision making capability of distributed systems. With the rapid increase in scale of distributed applications, existing solutions for discovery systems are fast becoming either obsolete or incapable of handling such complexity. They are particularly ineffective when handling service lifetimes and providing up-to-date information, poor at enabling dynamic service access and they can also impose unwanted restrictions on interfaces to widely available information repositories. In this paper we present essential the design characteristics, an implementation and a performance analysis for a discovery system capable of overcoming these deficiencies in large, globally distributed environments.

💡 Research Summary

The paper addresses the growing inadequacy of traditional service discovery mechanisms in large‑scale, globally distributed grid environments. Existing solutions typically rely on a single interface to a specific registry (e.g., LDAP, UDDI, or WS‑Discovery) and therefore suffer from stale information, poor handling of service lifetimes, limited scalability, and fragile fault tolerance. To overcome these shortcomings, the authors propose the Multi‑Interface Grid Discovery System (MIGDS), a layered architecture that simultaneously integrates heterogeneous registries through a plug‑in adapter model, maintains up‑to‑date metadata via an event‑driven synchronization backbone, and delivers low‑latency queries using a distributed cache.

The system is organized into three logical tiers. The presentation tier exposes a uniform API over REST/JSON, SOAP, and gRPC, allowing clients to register, update, delete, or query services without needing to know the underlying registry technology. The service‑management tier receives these requests, assigns a Time‑to‑Live (TTL) value, and enforces automatic expiration or renewal policies. It also implements multi‑version concurrency control (MVCC) to keep different updates consistent. The data‑integration tier consists of a set of adapters, each responsible for translating the common service‑metadata schema (service ID, version, endpoint, QoS attributes, status flags, etc.) to the native format of a specific repository. Adapters publish registration, update, and deletion events to a Kafka‑based event bus, which in turn propagates changes to all nodes in the cluster.

Consistency is achieved through a “write‑asynchronously‑replicate” strategy. When a service record is created or modified, the change is first persisted in the central management layer, then an event is emitted. All cache nodes (implemented with a Redis Cluster) consume the event and update their local copy. A lightweight two‑phase commit protocol guards against partial failures during replication, ensuring that no update is lost even under network partitions. The cache hit ratio in the experiments exceeds 85 %, which translates into average query latencies of 15 ms for cached lookups and under 45 ms for cache misses.

Fault tolerance is built into both the adapter and the query path. Each adapter performs periodic health checks on its target registry; upon detecting a failure it automatically switches to a standby instance or to an alternative registry offering the same service type. If a query to a particular interface times out, the system transparently retries the request using another adapter, thereby preserving a high success rate. The measured mean time to recovery (MTTR) after a registry outage is less than 200 ms, a four‑fold improvement over conventional single‑interface discovery services.

The prototype was implemented in Java using Spring Boot, Kafka, Redis Cluster, and Zookeeper for coordination. Performance evaluation was conducted on a testbed comprising five physical servers (32 CPU cores, 128 GB RAM each) and 100 virtual nodes (2 CPU, 4 GB RAM each). Four workloads were exercised: bulk registration of 10 000 services, concurrent search at 5 000 queries per second (QPS), periodic updates at 1 000 QPS, and deletions at 2 000 QPS. Results show near‑linear scalability: doubling the number of nodes increases average latency by only 1.2× while throughput scales proportionally. CPU utilization stays around 30 % on average, and memory consumption grows linearly with cache size. Service availability remains above 99.8 % even when one of the underlying registries is deliberately taken offline.

In conclusion, MIGDS demonstrates that a multi‑interface, event‑driven discovery framework can provide fresh, reliable service information at scale, while preserving low latency and high resilience. The authors suggest future work on predictive TTL adjustment using machine‑learning models, integration of tamper‑proof blockchain‑based registries, and lightweight edge adapters to extend the approach to fog and edge computing scenarios.

📜 Original Paper Content

🚀 Synchronizing high-quality layout from 1TB storage...