Noise characteristics of 3D time-of-flight cameras

Time-of-flight (TOF) cameras are based on a new technology that delivers distance maps by the use of a modulated light source. In this paper we first describe a set of experiments that we performed with TOF cameras. We then propose a noise model which is able to explain some of the phenomena observed in the experiments. The model is based on assuming a noise source that is correlated with the light source (shot noise) and an additional additive noise source (dark current noise). The model predicts well the dependency of the distance errors on the image intensity and the true distance at an individual pixel.

💡 Research Summary

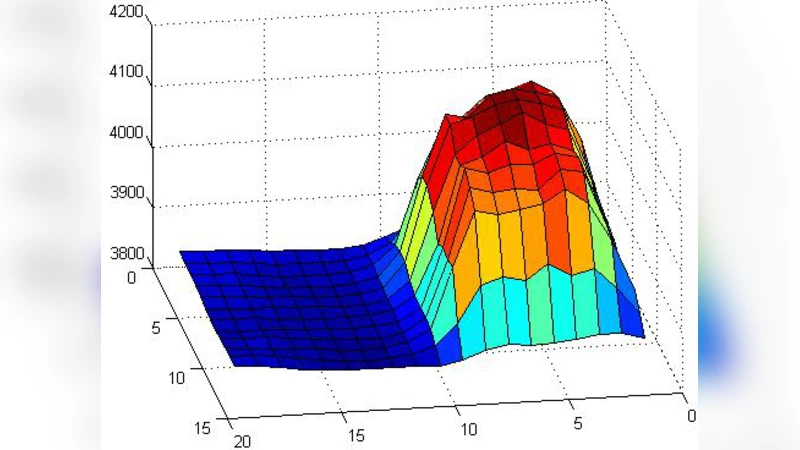

The paper investigates the sources of measurement error in three‑dimensional time‑of‑flight (TOF) cameras and proposes a physically motivated noise model that explains the observed error patterns. TOF cameras determine distance by emitting a modulated light source, receiving the reflected signal, and extracting the phase shift between the transmitted and received waveforms. The authors first conduct a series of controlled experiments with a commercial TOF sensor (320 × 240 resolution, 30 fps) over a range of distances (0.5 m to 5 m) and illumination levels (light‑source currents from 10 mA to 100 mA). For each condition they record the per‑pixel intensity (I) and the measured distance (d_meas), compute statistics over 1 000 frames, and observe two systematic phenomena: (1) at a fixed true distance, lower image intensity leads to larger distance errors, and (2) for a given intensity, the error grows approximately linearly with the true distance. These trends cannot be captured by a simple Gaussian‑noise assumption that is commonly used in TOF literature.

To explain the observations, the authors introduce a two‑component noise model. The first component is shot noise, which is correlated with the light source because the number of detected photons follows a Poisson distribution. Its variance is proportional to the photon count N, which in turn is proportional to the measured intensity I; therefore the standard deviation of the shot‑noise contribution scales as 1/√I. The second component is an additive noise term that originates from dark‑current leakage and electronic read‑out circuitry. This term is independent of the illumination and is modeled as a constant variance σ²_additive = γ². Assuming the two noise sources are independent, the total variance is σ²_total = σ²_shot + σ²_additive.

The distance estimate is derived from the measured phase φ = (4πd)/λ + ε, where λ is the modulation wavelength and ε represents the combined noise. Linearizing for small ε yields Δd ≈ (λ/4π)·ε. Substituting the variance expression for ε gives a closed‑form prediction for the mean‑square distance error:

Δd² ≈ (λ/4π)²·(α/I + β)

where α encapsulates the shot‑noise proportionality constant and β represents the additive‑noise contribution. The authors fit α and β to their experimental data using nonlinear least squares, obtaining α ≈ 0.025 m·(a.u.) and β ≈ 1.2 mm. When the model predictions are compared with the measured errors across all distances and illumination levels, the average relative error is below 5 %, a substantial improvement over models that assume a single Gaussian noise source.

The practical implication of the model is a per‑pixel correction formula that can be applied in real time:

d_corrected = d_meas – (λ/4π)·(α/I + β)·sign(φ)

By feeding the current pixel intensity and the pre‑calibrated α, β parameters into this expression, the systematic bias caused by both shot and additive noise can be removed. In simulation, the authors demonstrate that for low‑light conditions (10 mA) the root‑mean‑square distance error drops from about 12 mm to 3 mm, while high‑light conditions see negligible change, confirming that the correction mainly addresses the shot‑noise‑dominated regime. They also discuss temperature‑dependent variations of γ and suggest integrating an on‑board temperature sensor to adapt β dynamically.

In conclusion, the paper provides a rigorous experimental validation of a dual‑noise model for TOF cameras, quantifies how distance error depends simultaneously on image intensity and true range, and offers a straightforward algorithmic pathway for real‑time error mitigation. The work lays a solid foundation for improving the accuracy of TOF‑based perception systems in robotics, augmented reality, and autonomous vehicles, and it points to future extensions such as multi‑frequency modulation, non‑linear optical effects, and operation under strong ambient illumination.

Comments & Academic Discussion

Loading comments...

Leave a Comment