Bayesian Separation of Document Images with Hidden Markov Model

In this paper we consider the problem of separating noisy instantaneous linear mixtures of document images in the Bayesian framework. The source image is modeled hierarchically by a latent labeling process representing the common classifications of document objects among different color channels and the intensity process of pixels given the class labels. A Potts Markov random field is used to model regional regularity of the classification labels inside object regions. Local dependency between neighboring pixels can also be accounted for by a smoothness constraint on their intensities. Within the Bayesian approach, all unknowns, including the source, the classification, the mixing coefficients and the distribution parameters of these variables, are estimated from their posterior laws. The corresponding Bayesian computations are done by an MCMC sampling algorithm. Results from experiments on synthetic and real image mixtures are presented to illustrate the performance of the proposed method.

💡 Research Summary

The paper tackles the problem of separating noisy, instantaneous linear mixtures of document images within a Bayesian framework. The authors propose a hierarchical probabilistic model that treats each source image as two coupled stochastic layers. The first layer is a latent labeling field that assigns each pixel to a semantic class (e.g., text, background, graphics). This labeling field is modeled by a Potts Markov random field, which enforces spatial regularity by encouraging neighboring pixels to share the same label, thereby capturing the homogeneous regions typical of document objects. The second layer models the pixel intensities conditioned on the class labels. For each class, a Gaussian (or Gaussian mixture) distribution is assumed, and a smoothness constraint on neighboring intensities is incorporated to reflect local continuity and to mitigate noise.
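To make the role of the Potts prior concrete, here is a minimal sketch of one Gibbs sweep over a label field drawn from the prior alone (no data term). The function name `potts_gibbs_sweep`, the 4-neighbor system, and the value of `beta` are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def potts_gibbs_sweep(labels, beta, n_classes, rng):
    """One in-place Gibbs sweep over a Potts label field (prior only).

    Assumes a 4-connected neighborhood; each pixel's conditional probability
    of class k is proportional to exp(beta * #neighbors with label k).
    """
    height, width = labels.shape
    for i in range(height):
        for j in range(width):
            counts = np.zeros(n_classes)
            for di, dj in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                ni, nj = i + di, j + dj
                if 0 <= ni < height and 0 <= nj < width:
                    counts[labels[ni, nj]] += 1
            log_p = beta * counts
            p = np.exp(log_p - log_p.max())  # subtract max for stability
            p /= p.sum()
            labels[i, j] = rng.choice(n_classes, p=p)
    return labels

# Hypothetical usage: start from random labels and let the prior smooth them.
rng = np.random.default_rng(0)
field = rng.integers(0, 3, size=(16, 16))
for _ in range(10):
    field = potts_gibbs_sweep(field, beta=2.0, n_classes=3, rng=rng)
```

Larger values of `beta` favor larger homogeneous regions. In the full model the conditional would also include the likelihood of the pixel intensities given each class.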

The observed mixed images are assumed to be generated by an instantaneous linear mixing process: Y = A·X + ε, where Y denotes the observed multi‑channel image, X the latent source intensities, A the unknown mixing matrix, and ε additive Gaussian noise. All unknown quantities—including the labeling field, the source intensities, the mixing coefficients, and the hyper‑parameters of the class‑specific intensity distributions—are treated as random variables with appropriate priors (Potts parameter β, normal‑inverse‑Gamma priors for class means and variances, normal prior for A, inverse‑Gamma prior for noise variance).
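As an illustration of the observation model Y = A·X + ε, the following sketch simulates a noisy instantaneous mixture of two flattened source images. The image size, the mixing matrix values, and the noise level are arbitrary assumptions chosen for the example.

```python
import numpy as np

def mix_sources(X, A, noise_std, rng):
    """Return Y = A @ X + eps with i.i.d. Gaussian noise.

    X: (n_sources, n_pixels) flattened source images.
    A: (n_observations, n_sources) mixing matrix.
    """
    eps = rng.normal(0.0, noise_std, size=(A.shape[0], X.shape[1]))
    return A @ X + eps

rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(2, 64 * 64))  # two synthetic 64x64 sources
A = np.array([[1.0, 0.4],
              [0.3, 1.0]])                    # assumed mixing coefficients
Y = mix_sources(X, A, noise_std=0.05, rng=rng)
```

"Instantaneous" here means that each observed pixel depends only on the source pixels at the same location, so the mixing reduces to a single matrix product.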

Because the joint posterior distribution is analytically intractable, the authors resort to Markov chain Monte Carlo (MCMC) sampling. A Gibbs sampler is constructed that iteratively updates: (1) the labeling field via a conditional Potts distribution (using Metropolis‑Hastings or Swendsen‑Wang style moves), (2) class‑specific intensity parameters from their conjugate normal‑inverse‑Gamma posteriors, (3) the source intensities from a Gaussian conditional posterior given current labels and mixing matrix, (4) the mixing matrix from a Gaussian posterior derived from the linear regression formulation, and (5) the noise variance from an inverse‑Gamma posterior. This cycle yields samples from the full posterior, allowing point estimates (e.g., posterior means) and uncertainty quantification for all variables.
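Steps (4) and (5) of the cycle above can be sketched as conjugate updates. The sketch below assumes an isotropic Gaussian prior N(0, tau2·I) on each row of A, a single noise variance shared by all channels with an inverse-Gamma(a0, b0) prior, and known sources X. These choices and the function names are illustrative assumptions, not the paper's exact specification.

```python
import numpy as np

def sample_mixing_matrix(Y, X, sigma2, tau2, rng):
    """Draw each row of A from its Gaussian conditional posterior."""
    n_obs, n_src = Y.shape[0], X.shape[0]
    precision = X @ X.T / sigma2 + np.eye(n_src) / tau2
    cov = np.linalg.inv(precision)
    cov = 0.5 * (cov + cov.T)  # enforce exact symmetry
    A = np.empty((n_obs, n_src))
    for i in range(n_obs):
        mean = cov @ (X @ Y[i]) / sigma2
        A[i] = rng.multivariate_normal(mean, cov)
    return A

def sample_noise_variance(Y, X, A, a0, b0, rng):
    """Draw sigma^2 from its inverse-Gamma conditional posterior."""
    resid = Y - A @ X
    shape = a0 + resid.size / 2.0
    rate = b0 + 0.5 * np.sum(resid ** 2)
    # If g ~ Gamma(shape, scale=1/rate), then 1/g ~ InvGamma(shape, rate).
    return 1.0 / rng.gamma(shape, 1.0 / rate)

# Hypothetical usage on synthetic data with the sources held fixed.
rng = np.random.default_rng(1)
X = rng.uniform(0.0, 1.0, size=(2, 5000))
A_true = np.array([[1.0, 0.4], [0.3, 1.0]])
Y = A_true @ X + rng.normal(0.0, 0.05, size=(2, 5000))
sigma2 = 1.0
for _ in range(30):
    A = sample_mixing_matrix(Y, X, sigma2, tau2=10.0, rng=rng)
    sigma2 = sample_noise_variance(Y, X, A, a0=2.0, b0=1e-3, rng=rng)
```

In the full sampler the sources and labels would be redrawn between these two steps instead of being held fixed.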

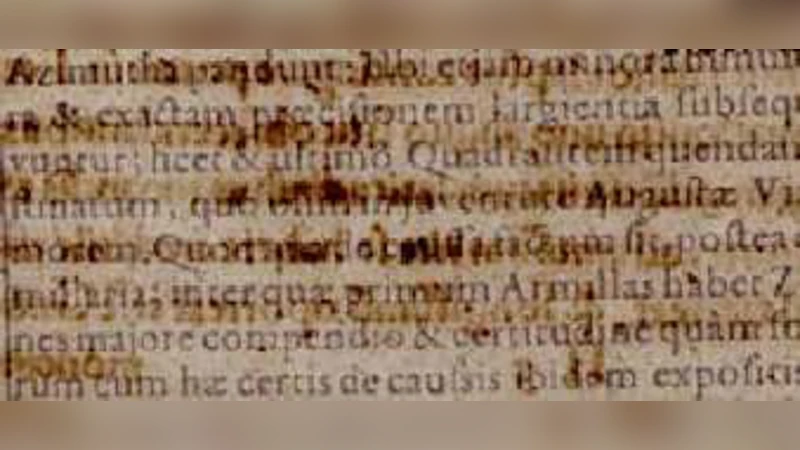

Experimental validation is performed on both synthetic mixtures, where ground‑truth labels and mixing matrices are known, and on real scanned documents with complex color mixing. Quantitative metrics such as peak signal‑to‑noise ratio (PSNR), structural similarity index (SSIM), and label classification accuracy demonstrate that the proposed Bayesian method outperforms conventional independent component analysis (ICA) and simpler Bayesian separation techniques by several decibels in PSNR and by a noticeable margin in label accuracy, especially under low SNR conditions. Visual results on real documents show clean separation of text from background and graphics, with the Potts‑based labeling preserving coherent object boundaries even in heavily noisy regions. Sensitivity analysis indicates that the algorithm is robust to the choice of hyper‑parameters, converging reliably after a moderate number of MCMC iterations.
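For reference, PSNR between a ground-truth source and its estimate can be computed as follows. This is the standard definition, with the assumption that intensities are scaled to [0, 1] so the peak value is 1. The summary does not state which scaling the authors used.

```python
import numpy as np

def psnr(reference, estimate, peak=1.0):
    """Peak signal-to-noise ratio in decibels."""
    mse = np.mean((np.asarray(reference) - np.asarray(estimate)) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(peak ** 2 / mse)
```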

In summary, the paper introduces a comprehensive Bayesian solution for document image separation that explicitly models class labels, leverages spatial regularity through a Potts field, and integrates intensity smoothness. The use of full posterior inference via MCMC enables simultaneous estimation of sources, mixing coefficients, and model parameters, delivering superior performance on both synthetic and real data. The authors suggest future extensions to nonlinear mixing models, multi‑scale hierarchical labeling, and variational inference schemes for faster, possibly real‑time, deployment.
