Supervised Feature Selection via Dependence Estimation

We introduce a framework for filtering features that employs the Hilbert-Schmidt Independence Criterion (HSIC) as a measure of dependence between the features and the labels. The key idea is that good features should maximise such dependence. Feature selection for various supervised learning problems (including classification and regression) is unified under this framework, and the solutions can be approximated using a backward-elimination algorithm. We demonstrate the usefulness of our method on both artificial and real world datasets.

💡 Research Summary

The paper proposes a unified framework for supervised feature selection that leverages the Hilbert‑Schmidt Independence Criterion (HSIC) as a measure of statistical dependence between a set of input features and the target labels. HSIC quantifies the degree of nonlinear dependence by embedding the variables into reproducing kernel Hilbert spaces via kernel functions (the authors primarily use Gaussian kernels) and computing the squared Hilbert‑Schmidt norm of the cross‑covariance operator. A value of zero indicates independence, while larger values reflect stronger dependence. The central hypothesis is that a good feature subset should maximize HSIC with the labels, thereby preserving the most predictive information.

Because exhaustively searching all 2^p possible subsets is computationally infeasible, the authors adopt a backward‑elimination strategy. Starting with the full feature set, at each iteration they evaluate the decrease in HSIC that would result from removing each remaining feature. The feature whose removal causes the smallest HSIC drop is eliminated. This process repeats until removing any additional feature would reduce HSIC, yielding a compact subset that retains maximal label dependence. The algorithm’s computational cost is dominated by repeated HSIC evaluations, which scale as O(p²n) per iteration (p = current number of features, n = number of samples). To keep the method practical, the paper discusses kernel matrix reuse, low‑rank approximations (e.g., Nyström), and efficient trace computations.

The framework is model‑agnostic: once a feature subset is selected, any downstream learner—linear models, kernel SVMs, random forests, or deep neural networks—can be trained on it. This contrasts with many existing methods that are tied to a specific classifier or rely on linear assumptions.

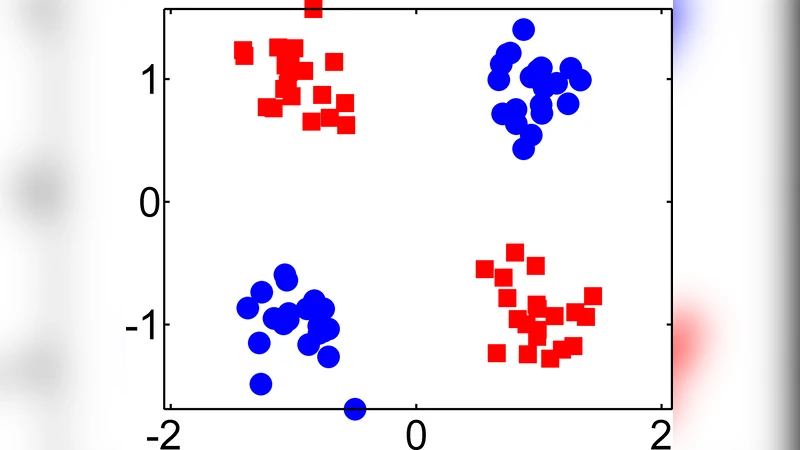

Empirical evaluation is conducted on synthetic data designed to exhibit complex, nonlinear class boundaries, as well as on a suite of real‑world benchmarks from the UCI repository, image classification, and text categorization tasks. Baselines include Mutual Information, ReliefF, L1‑regularized logistic regression, and recent deep‑learning‑based importance measures. Across all experiments, the HSIC‑based selector consistently achieves higher test accuracy (or lower RMSE for regression) while selecting far fewer features—typically 10–30 % of the original dimensionality. Moreover, the reduced feature sets lead to faster training times and lower memory consumption, highlighting the practical benefits of the approach.

The authors acknowledge two main limitations. First, HSIC depends on kernel hyper‑parameters (especially the bandwidth of the Gaussian kernel); suboptimal choices can degrade performance, and the current solution relies on cross‑validation. Second, HSIC estimates can be biased in small‑sample regimes, potentially leading to unstable selections. They suggest future work on multi‑kernel aggregation, Bayesian optimization of kernel parameters, bias‑corrected estimators, and hybrid forward‑backward strategies to further improve robustness.

In summary, the paper introduces a theoretically sound, non‑parametric, and computationally tractable method for supervised feature selection. By directly maximizing a principled dependence measure, it unifies classification and regression scenarios, delivers consistent empirical gains, and offers a flexible preprocessing tool that can be paired with any predictive model. The work opens avenues for extending dependence‑based selection to large‑scale, distributed settings and for integrating richer kernel families to capture diverse data structures.

Comments & Academic Discussion

Loading comments...

Leave a Comment