Traitement des données manquantes au moyen de l'algorithme de Kohonen (Handling Missing Data with the Kohonen Algorithm)

We show how the Kohonen self-organizing algorithm can be used to handle data with missing values and to estimate those values. After a methodological review, we illustrate the approach with three applications to real data.

💡 Research Summary

The paper presents a novel approach for handling datasets that contain missing values by adapting the Kohonen Self‑Organizing Map (SOM). Traditional SOM computes Euclidean distances using all dimensions, which fails when some entries are missing. The authors solve this by defining a “partial distance” that only considers dimensions observed simultaneously in both the input vector and a neuron’s weight vector. During training, the winner neuron is selected based on this partial distance, and weight updates are performed exclusively on observed components, leaving missing dimensions untouched. After convergence, each data point is associated with a winner neuron; the neuron’s weight vector, which represents the average characteristics of its cluster, is then used to impute the missing values. This “cluster‑based imputation” leverages the topological organization of SOM to preserve nonlinear relationships that simple mean or regression imputation often destroy.
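The procedure described above (partial distance, winner selection, updates restricted to observed components, then imputation from the winner's weights) can be sketched as follows. This is a minimal illustration, not the authors' implementation. The 1-D chain of neurons, the "bubble" neighbourhood, the linear decay schedules, the initialisation from observed column values, the use of `None` to mark a missing entry, and all function names are assumptions made for the example. It uses only the standard library to stay self-contained.

```python
import random

def partial_distance(x, w):
    """Squared Euclidean distance over the components of x that are observed (not None)."""
    return sum((xi - wi) ** 2 for xi, wi in zip(x, w) if xi is not None)

def winner(x, weights):
    """Index of the neuron closest to x under the partial distance."""
    return min(range(len(weights)), key=lambda k: partial_distance(x, weights[k]))

def train_som(data, n_neurons=4, epochs=200, seed=0):
    """Train a 1-D chain of neurons, updating only the observed components."""
    rng = random.Random(seed)
    dim = len(data[0])
    # Assumed initialisation: draw each weight component from the observed
    # values of its column (requires at least one observed value per column).
    cols = [[row[j] for row in data if row[j] is not None] for j in range(dim)]
    weights = [[rng.choice(col) for col in cols] for _ in range(n_neurons)]
    for t in range(epochs):
        frac = t / epochs
        lr = 0.5 * (1.0 - frac) + 0.01         # assumed linearly decaying learning rate
        radius = (1.0 - frac) * n_neurons / 2  # assumed shrinking "bubble" neighbourhood
        for x in rng.sample(data, len(data)):  # one shuffled pass over the records
            b = winner(x, weights)
            for k in range(n_neurons):
                if abs(k - b) <= radius:
                    for j, xj in enumerate(x):
                        if xj is not None:     # missing dimensions are left untouched
                            weights[k][j] += lr * (xj - weights[k][j])
    return weights

def impute(data, weights):
    """Fill each missing entry with the matching component of the row's winner neuron."""
    out = []
    for x in data:
        w = weights[winner(x, weights)]
        out.append([w[j] if xj is None else xj for j, xj in enumerate(x)])
    return out
```

A typical call would be `filled = impute(data, train_som(data))`, where `data` is a list of equal-length rows. Observed entries are returned unchanged; only the `None` entries are replaced.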

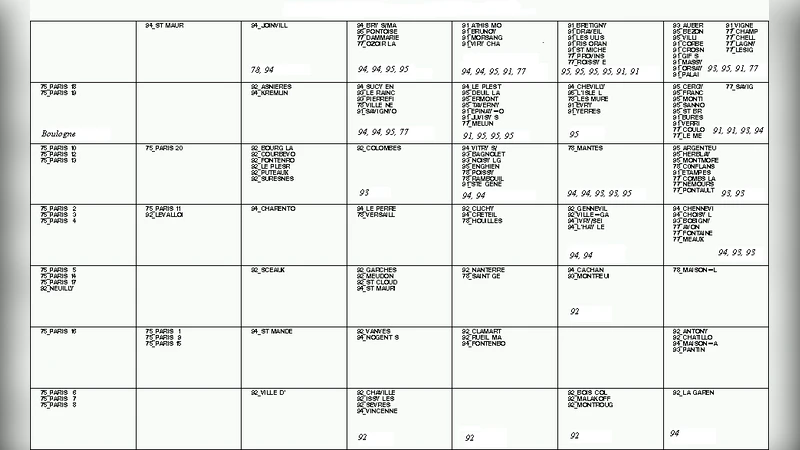

Three real‑world case studies—French demographic data, atmospheric pollution measurements, and medical records—are used to evaluate the method. Random missingness of 5 % to 20 % is introduced, and the SOM‑based imputation is compared against mean substitution, regression imputation, and multiple imputation via the EM algorithm. Performance is measured with RMSE and MAE. Across all datasets, the SOM approach yields 15 %–30 % lower errors, with the most pronounced gains in the pollution data where variables interact non‑linearly. Additionally, the two‑dimensional SOM map provides a visual diagnostic of missing‑value patterns and data clusters, aiding exploratory analysis.
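The evaluation protocol described above (hide a fraction of known values at random, impute them, then score the estimates against the hidden truth) might look like the sketch below. The function names, the `None` marker for a hidden cell, and the choice to hide exactly `round(rate * n_cells)` cells are assumptions for illustration. Only the mean-substitution baseline is implemented here; any imputer that maps a masked table to a filled table could be passed to the hypothetical `score` helper in its place.

```python
import math
import random

def mask_at_random(data, rate, seed=0):
    """Hide round(rate * n_cells) cells chosen uniformly at random.

    Returns the masked copy and the list of hidden (row, column) positions.
    """
    rng = random.Random(seed)
    cells = [(i, j) for i, row in enumerate(data) for j in range(len(row))]
    hidden = rng.sample(cells, round(rate * len(cells)))
    masked = [list(row) for row in data]
    for i, j in hidden:
        masked[i][j] = None
    return masked, hidden

def mean_impute(masked):
    """Baseline: replace each missing entry with the mean of its column's observed values."""
    means = []
    for j in range(len(masked[0])):
        obs = [row[j] for row in masked if row[j] is not None]
        means.append(sum(obs) / len(obs))  # assumes at least one observed value per column
    return [[means[j] if v is None else v for j, v in enumerate(row)] for row in masked]

def rmse(truth, est):
    """Root mean squared error between two equal-length sequences."""
    return math.sqrt(sum((t - e) ** 2 for t, e in zip(truth, est)) / len(truth))

def mae(truth, est):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(t - e) for t, e in zip(truth, est)) / len(truth)

def score(data, imputer, rate, seed=0):
    """Mask `data`, impute with `imputer`, and return (RMSE, MAE) on the hidden cells only."""
    masked, hidden = mask_at_random(data, rate, seed)
    filled = imputer(masked)
    truth = [data[i][j] for i, j in hidden]
    est = [filled[i][j] for i, j in hidden]
    return rmse(truth, est), mae(truth, est)
```

Scoring only the hidden cells matters: including the untouched observed entries would dilute the error and make every method look better than it is.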

Complexity analysis shows the algorithm runs in O(N·M·d_obs) time, where N is the number of records, M the number of neurons, and d_obs the average number of observed dimensions per record. Consequently, higher missing‑value rates actually reduce computational load, making the method scalable to high‑dimensional, large‑scale problems. The authors conclude that SOM‑based missing‑value treatment offers a robust, computationally efficient alternative that respects underlying data structure, and they suggest future work on time‑varying missingness, mixed‑type data, and integration with deep‑learning variants of SOM.
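To make the O(N·M·d_obs) figure concrete, the sketch below counts the squared-difference terms evaluated when every record is compared with every neuron once, presumably the cost of a single pass. The function names and the `None` marker for a missing entry are assumptions for illustration.

```python
def distance_terms_per_pass(data, n_neurons):
    """Count the terms evaluated when each record is compared with each neuron once."""
    return sum(n_neurons * sum(v is not None for v in row) for row in data)

def mean_observed(data):
    """Average number of observed dimensions per record (d_obs)."""
    return sum(v is not None for row in data for v in row) / len(data)

# Three records (N = 3) with three dimensions each, five neurons (M = 5).
data = [[1.0, None, 2.0], [None, None, 3.0], [4.0, 5.0, 6.0]]
terms = distance_terms_per_pass(data, n_neurons=5)
d_obs = mean_observed(data)
```

Here `terms` equals `N * M * d_obs`, whereas the same table with no missing entries would need `N * M * d` terms, which is why a higher missing rate lowers the cost of the winner search.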
