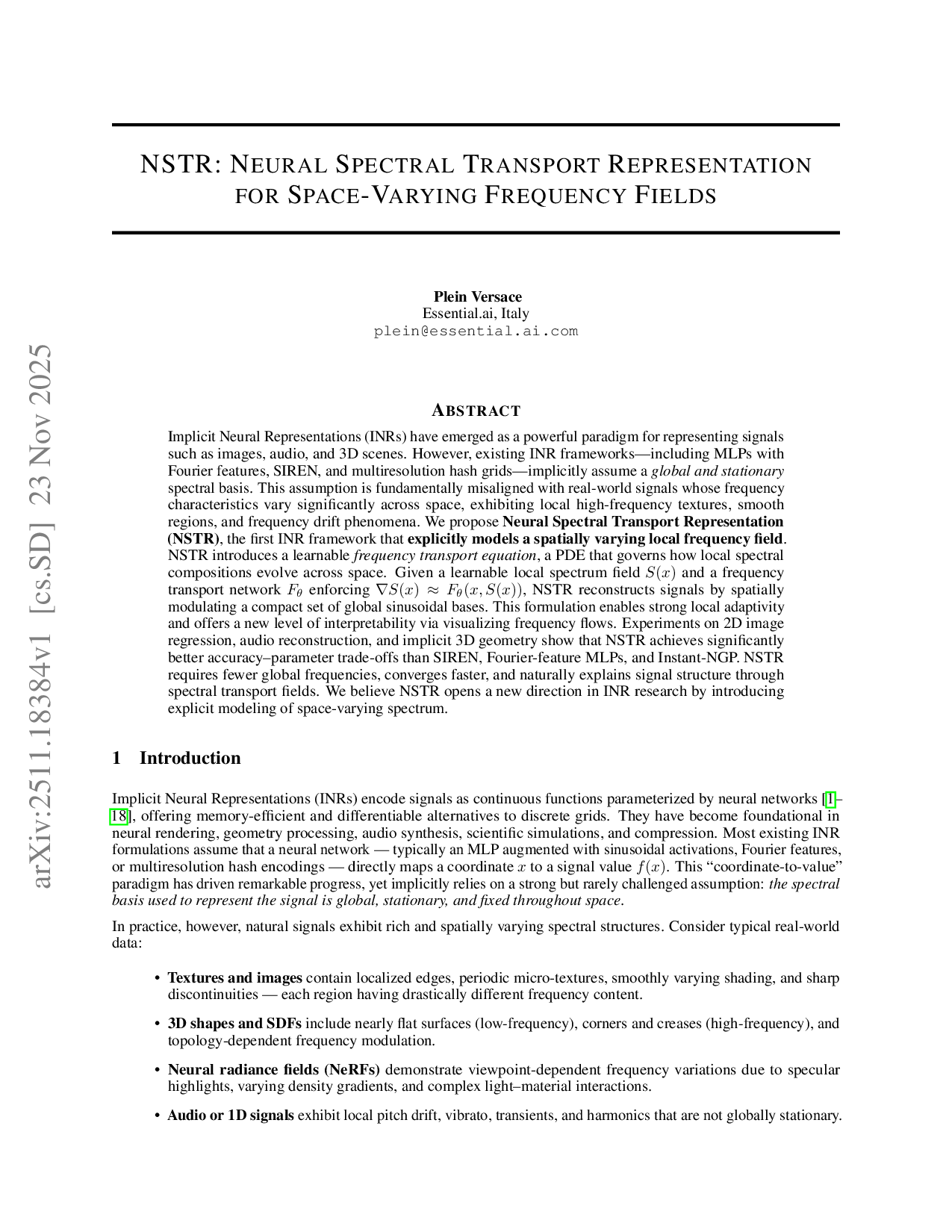

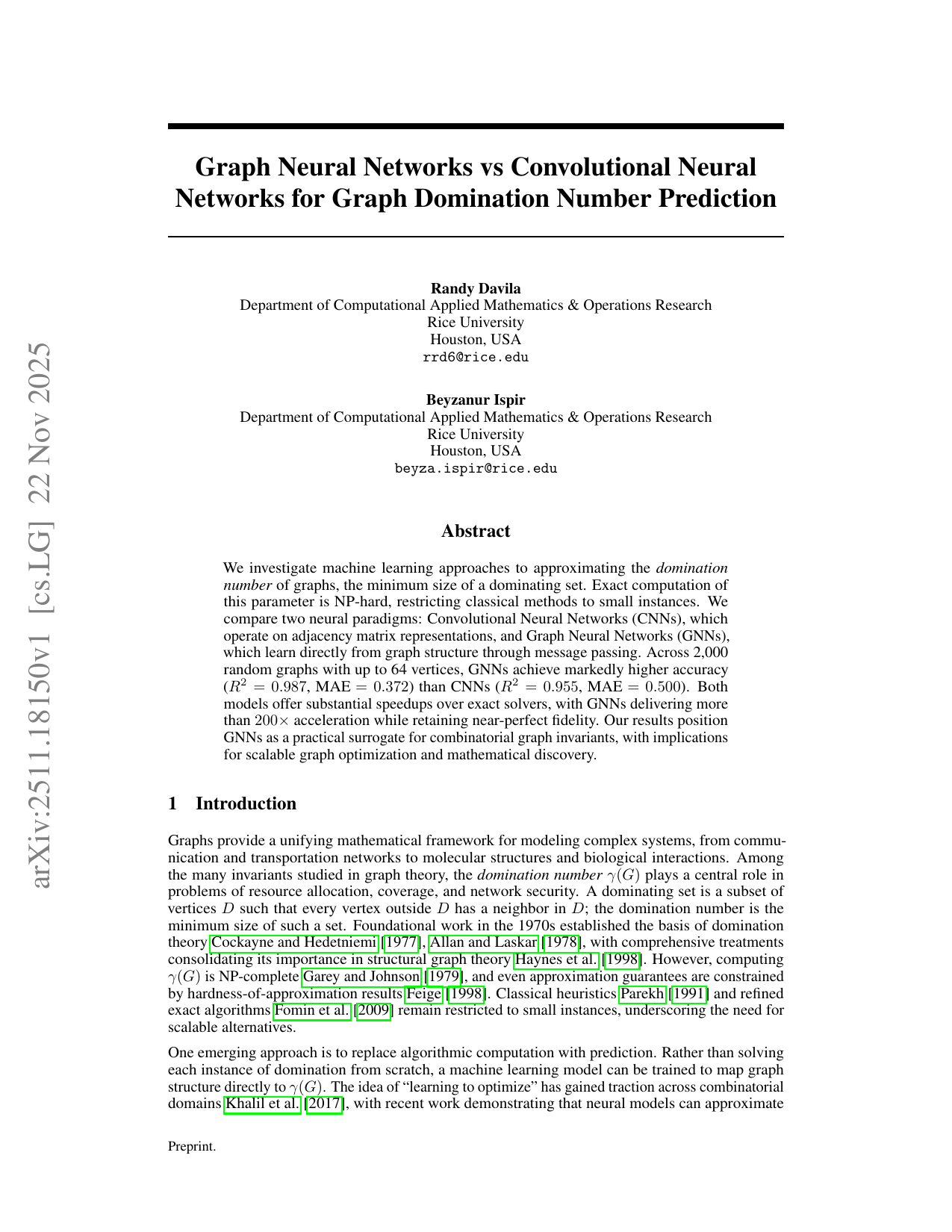

Reducing MLP Capacity in Vision Transformers Leads to Improved Performance

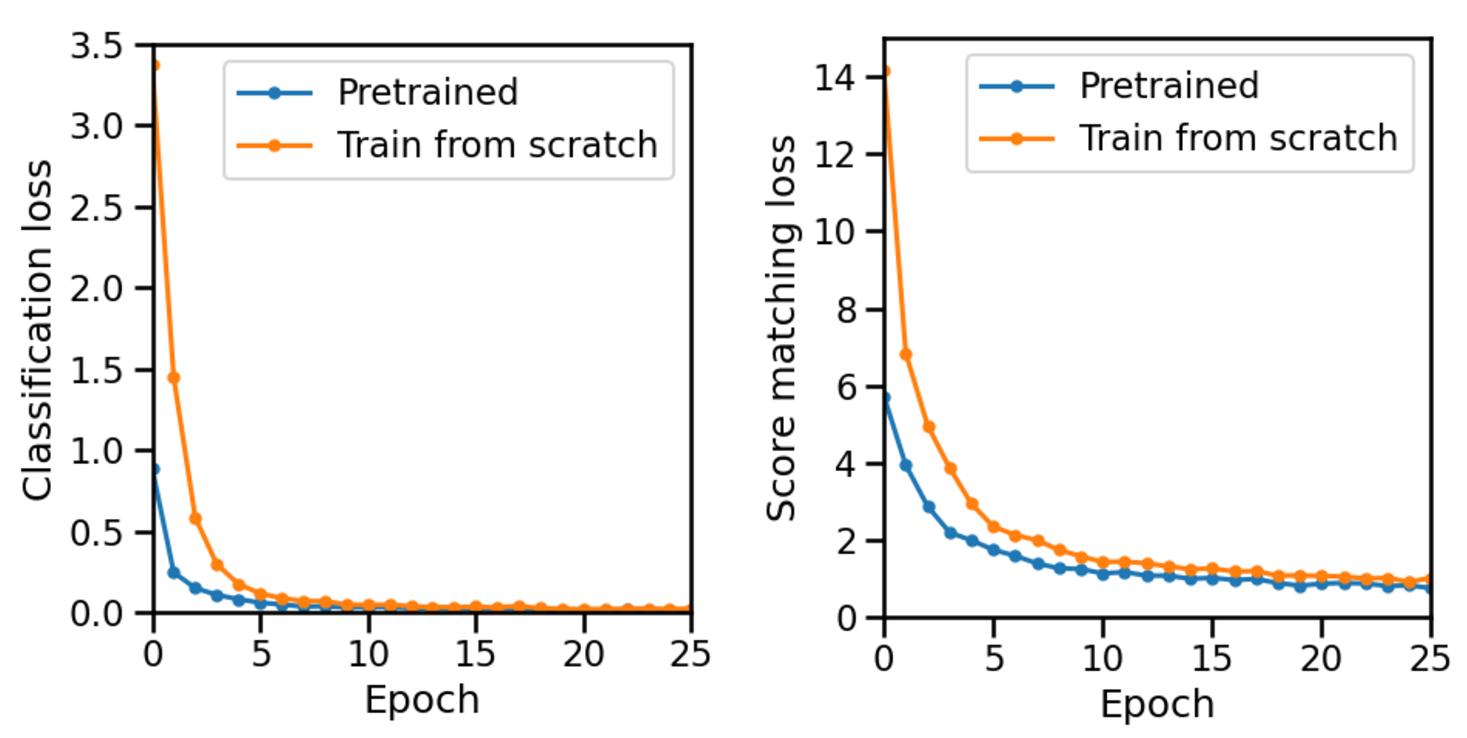

Although scaling laws and many empirical results suggest that larger Vision Transformers often perform better, accuracy and training behavior do not always improve monotonically with scale. Focusing on ViT-B/16 trained